Physical Intelligence has unveiled π0.7, a new foundation model for robotics that demonstrates zero-shot task generalisation — executing unfamiliar tasks without task-specific training. If the capability holds up at scale, it signals a fundamental shift in how robot intelligence is valued, priced, and deployed across industrial and commercial automation.

What is π0.7 and What Can It Do?

π0.7 is a foundation model for robot control developed by Physical Intelligence (π), a San Francisco-based robotics AI startup. The model is designed to act as a general-purpose "brain" that can direct robotic hardware through novel tasks — tasks it was never explicitly trained to perform — by reasoning from prior experience and contextual understanding.

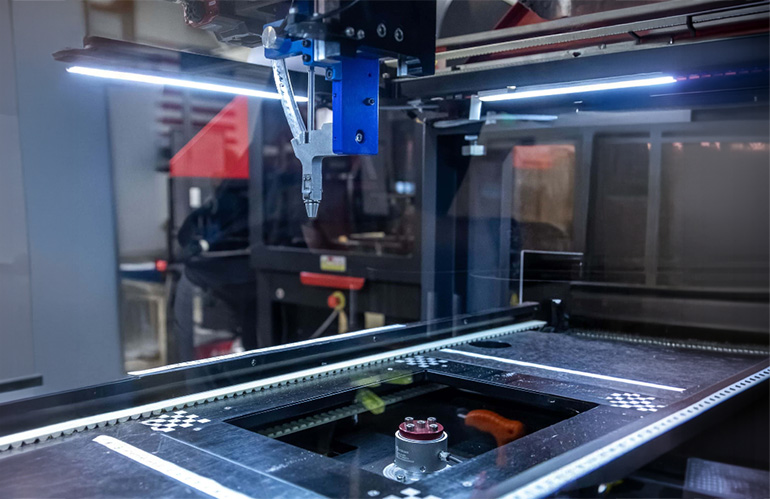

This is the capability the robotics industry has been chasing for a decade. Today's deployed robots — whether a cobot on an assembly line or a logistics arm in a fulfilment centre — are almost universally task-specific. They perform one job exceptionally well and fail immediately when conditions change. π0.7's claim is that it can generalise: given a new object, a new arrangement, or an unfamiliar instruction, the model figures it out.

According to TechCrunch, Physical Intelligence describes π0.7 as "an early but meaningful step" toward a general-purpose robot brain — careful language that signals both genuine progress and honest acknowledgment of how far the field still has to travel.

How Does Zero-Shot Generalisation Work in Robotics?

Zero-shot generalisation means the model can successfully complete a task it has never seen during training, using only reasoning and pattern transfer from related experiences. In robotics, this is extraordinarily difficult because physical interaction requires real-time sensorimotor control — the model must translate abstract understanding into precise, continuous motor commands under physical constraints.
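One way to make this definition concrete is to score a policy separately on tasks that appeared in training and tasks that did not. The sketch below is illustrative only: the `Episode` record, the task names, and the outcomes are hypothetical and are not taken from Physical Intelligence's evaluation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Episode:
    task: str       # hypothetical task label
    success: bool   # whether the robot completed the task


def split_success_rates(episodes, training_tasks):
    """Return (seen_rate, zero_shot_rate); a rate is None if its group is empty."""
    seen = [e.success for e in episodes if e.task in training_tasks]
    unseen = [e.success for e in episodes if e.task not in training_tasks]

    def rate(outcomes):
        return sum(outcomes) / len(outcomes) if outcomes else None

    return rate(seen), rate(unseen)


# Hypothetical data: trained on two tasks, evaluated on three.
training_tasks = {"fold_towel", "stack_cups"}
episodes = [
    Episode("fold_towel", True),
    Episode("fold_towel", True),
    Episode("stack_cups", True),
    Episode("stack_cups", False),
    Episode("load_dishwasher", True),    # task absent from training
    Episode("load_dishwasher", False),
    Episode("load_dishwasher", False),
    Episode("load_dishwasher", True),
]

seen_rate, zero_shot_rate = split_success_rates(episodes, training_tasks)
print(f"seen tasks: {seen_rate:.2f}, unseen tasks: {zero_shot_rate:.2f}")
```

Only the second number is a zero-shot result. A strong first number says nothing about generalisation.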

The dominant paradigm in robotics AI today is imitation learning or reinforcement learning on narrow task distributions. With imitation learning, for example, a robot is fed thousands of demonstrations of a specific action and learns to replicate that action reliably. This works, but it creates brittle, single-purpose systems. Every new task means a new training run, new data collection, and significant engineering overhead.

Foundation models like π0.7 take a different approach. Rather than training for one task, the model is trained across a wide distribution of tasks and embodiments, building a generalised representation of how physical manipulation works. Think of it like the difference between a tradesperson trained to install only one brand of fixture versus one trained across building systems who can adapt to whatever a job site requires. The analogy breaks down at the level of precision — robot manipulation requires sub-centimetre accuracy that broad training alone cannot guarantee — but the architectural philosophy is the same.

Physical Intelligence is not alone in pursuing this direction. Google DeepMind's RT-2, Figure AI's neural architectures, and 1X Technologies' training pipelines all reflect the same bet: that large-scale, diverse training data produces more deployable robots than narrow specialisation ever could.

How Does π0.7 Compare to Current Specialised Robot Systems?

The practical gap between π0.7's claimed capabilities and the robots currently deployed in industry is significant — and instructive. Here is how the two paradigms stack up across the dimensions that matter most to buyers and system integrators:

| Capability | Specialised Robots (current) | π0.7 / Foundation Model Approach |
|---|---|---|
| Task flexibility | Single-task or narrow task family | Multi-task, zero-shot generalisation claimed |
| Setup time for new task | Days to weeks (retraining + validation) | Potentially hours (natural language instruction) |
| Reliability on trained tasks | Very high (>99% in controlled conditions) | Still maturing; generalisation trades peak reliability |
| Data requirement | Hundreds to thousands of task demos | Large pre-training corpus; few-shot at deployment |
| Hardware compatibility | Tuned to specific hardware | Designed to transfer across robot embodiments |
| Commercial readiness | Fully production-deployed | Research/early commercial stage |

The tradeoff is stark. Specialised systems win on reliability for their trained task. General-purpose models win on adaptability. In most industrial deployments today — welding, palletising, pick-and-place — reliability is the dominant requirement, and specialised cobots and industrial arms remain the rational choice. You can browse used industrial robots for sale on Botmarket to see the current commercial landscape.

But the calculus changes the moment a facility needs to run more than a handful of task types, or when product lines change frequently. That is where π0.7's value proposition becomes genuinely competitive.
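A rough break-even calculation shows why the number of task types matters. The sketch below is a back-of-envelope model, not a costing tool: the function name and every figure are placeholder assumptions, and the one-time integration cost for the general-purpose route is a modelling assumption rather than a number from Physical Intelligence.

```python
import math


def break_even_tasks(specialised_days_per_task, general_days_per_task,
                     general_one_time_days):
    """Smallest number of new tasks at which the general-purpose route
    needs strictly less total setup time than the specialised route.

    Returns None if the general-purpose route never catches up.
    """
    saving_per_task = specialised_days_per_task - general_days_per_task
    if saving_per_task <= 0:
        return None
    # Solve specialised * n > one_time + general * n for the smallest integer n.
    return math.floor(general_one_time_days / saving_per_task) + 1


# Placeholder inputs: 5 days to retrain and validate a specialised system,
# half a day to instruct a general model, 20 days of one-time integration.
n = break_even_tasks(specialised_days_per_task=5,
                     general_days_per_task=0.5,
                     general_one_time_days=20)
print(f"General-purpose route is faster from task {n} onward")
```

With these placeholder inputs the crossover arrives at the fifth new task. A facility that changes tasks rarely stays below it, which is consistent with specialised systems remaining the rational choice there.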

What Are the Limitations of π0.7?

Zero-shot generalisation in robotics is real but still bounded — and Physical Intelligence's own framing acknowledges this.

The first constraint is reliability degradation on novel tasks. A model that generalises across tasks typically gives up some peak performance on any individual task. In safety-critical or high-throughput industrial settings, even a small drop in success rate translates directly to downtime and cost. This is not a criticism specific to π0.7; it is a fundamental tension in the generalisation-versus-specialisation tradeoff.
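The link between success rate and downtime is simple arithmetic. The sketch below uses made-up inputs (cycle count, success rates, and recovery time are all assumptions chosen for illustration) and a deliberately simple model in which every failed cycle costs a fixed recovery time.

```python
def expected_downtime_minutes(cycles_per_shift, success_rate,
                              recovery_minutes_per_failure):
    """Expected minutes lost per shift, assuming each failed cycle
    costs a fixed recovery time (a simplifying assumption)."""
    expected_failures = cycles_per_shift * (1.0 - success_rate)
    return expected_failures * recovery_minutes_per_failure


# Hypothetical cell: 1,000 cycles per shift, 2 minutes to recover from a failure.
for label, success_rate in [("specialised", 0.99), ("general-purpose", 0.97)]:
    minutes = expected_downtime_minutes(1000, success_rate, 2)
    print(f"{label}: about {minutes:.0f} minutes of expected downtime per shift")
```

Under these assumptions, a two-point drop in success rate, from 99% to 97%, triples expected downtime from about 20 to about 60 minutes per shift.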

The second constraint is physical grounding. Language and vision models generalise well because their outputs are soft — a slightly imprecise sentence is still comprehensible. Robot manipulation is not forgiving in the same way. A grasping trajectory that is two centimetres off drops the object. Foundation models for robotics must therefore solve a harder problem than their language counterparts, and the engineering margin for error is far smaller.

The third is hardware dependency. π0.7 is trained to transfer across embodiments — different robot bodies and actuator configurations — but real-world deployment still requires calibration and integration work. The vision of a single model running on any robot remains aspirational for now.

Finally, there is the data question. Foundation models require enormous, diverse training datasets. Physical Intelligence has been collecting robot interaction data at scale, but the robotics data ecosystem is far less mature than internet-scale text or image corpora. This is a constraint the entire field shares, not a flaw unique to this model.

What This Means for Robotics Buyers and Automation Engineers

For buyers evaluating robotic systems today, π0.7 does not replace the robots currently on the market — but it reframes the purchasing decision in a way that matters.

In the near term (12-24 months), the practical advice is unchanged: if you need a robot to do one job reliably, a purpose-built cobot or industrial arm remains the right tool. The reliability, safety certification, and vendor support of established hardware are not things a research-stage AI model can replicate yet. If you are evaluating options, used cobots for sale on Botmarket offer a cost-effective entry point for single-task automation.

In the medium term (2-4 years), buyers should start asking vendors a new question: what is the upgrade path for AI capabilities? Hardware procured today will still be operational when foundation model-based control becomes commercially viable. Robots with open software architectures and standard compute interfaces will be far easier to upgrade than closed, proprietary systems.

For system integrators, the implication is more immediate. The value-add in robotics integration is shifting. Programming and task-specific tuning — historically the core of integration work — becomes less differentiating as models like π0.7 mature. The competitive advantage moves toward deployment expertise, data infrastructure, and the ability to validate generalised AI behaviour in safety-critical settings.

The pricing frontier is where this gets strategically interesting. Today, robot intelligence is priced by task: each new capability requires a new software package or custom integration. If general-purpose models commoditise task programming, the value concentrates in the model layer and in the hardware that best supports it. That is a structural shift that will reshape vendor economics across the entire robotics supply chain.

Frequently Asked Questions

What is π0.7? π0.7 is a foundation model for robot control developed by Physical Intelligence, a robotics AI startup. It is designed to enable robotic systems to perform tasks they were never explicitly trained on, a capability known as zero-shot generalisation, and it represents an early step toward a general-purpose robot brain.

What does zero-shot mean in robotics AI? Zero-shot task completion means a robot can successfully execute a new, unfamiliar task without any task-specific training demonstrations. The model draws on patterns learned during broad pre-training to reason through novel physical interactions, rather than requiring hundreds of new examples for each task variation.

Is π0.7 commercially available? As of the announcement, π0.7 is described as an early-stage research and development milestone, not a fully commercial product. Physical Intelligence is backed by significant venture capital and working toward commercial deployment, but production-ready availability timelines have not been publicly specified.

How does π0.7 affect the value of specialised industrial robots? In the near term, specialised cobots and industrial arms retain their advantage in reliability and commercial readiness for defined tasks. Over a 3-5 year horizon, general-purpose models could reduce the cost and time of deploying robots across new tasks, which may shift competitive value toward flexible hardware platforms and model-layer providers.

Which other companies are building general-purpose robot AI? The field includes Google DeepMind (RT-2 and subsequent models), Figure AI, 1X Technologies, Agility Robotics, and Boston Dynamics, all of which are investing in foundation model approaches or neural policy architectures aimed at broader task generalisation across robotic platforms.

Physical Intelligence's π0.7 represents the clearest public signal yet that the robotics industry's centre of gravity is moving from task-specific programming toward generalised AI reasoning applied to physical systems. The technology is early, the limitations are real, and the commercial timelines are uncertain — but the architectural direction is set. The question for every buyer, integrator, and vendor in the robotics market is no longer whether general-purpose robot intelligence is coming, but how fast, and whether their current infrastructure is positioned to absorb it.

Is your facility's robot hardware architecture ready to integrate foundation model AI — or are you locked into proprietary systems that will require full replacement?
