Autonomous vehicles and robots generate more sensor data than most organisations can actually use. Nomadic has raised $8.4 million in seed funding to fix that — building an infrastructure layer that converts raw AV and robot footage into structured, searchable datasets using deep learning, addressing a bottleneck that quietly limits the pace of autonomous systems development across the industry.

What Does Nomadic Actually Do?

Nomadic is building a data infrastructure platform that transforms raw video and sensor footage captured by autonomous vehicles and robots into structured, queryable datasets. Instead of raw footage sitting in storage — expensive to keep, nearly impossible to search — Nomadic's system uses deep learning models to tag, classify, and index that data so engineers can actually find what they need.

According to TechCrunch, the $8.4 million seed round positions Nomadic as infrastructure for the broader Physical AI stack — not just for AV programmes, but for any robotic system generating continuous sensor streams that need to be turned into training signal.

Think of it like the difference between a warehouse full of unlabelled boxes and a fully indexed inventory system. The footage exists either way, but only one version is operationally useful. That analogy does break down at scale — the problem with AV data isn't just labelling, it's the sheer volume combined with the cost of human annotation and the sparsity of safety-critical edge cases buried inside hours of routine footage.

Why Is AV and Robot Data So Hard to Manage?

A single autonomous vehicle can generate between 1 and 40 terabytes of raw sensor data per day, depending on its sensor suite — cameras, LiDAR, radar, IMU. A small fleet of ten vehicles running continuous operations produces more data per week than most enterprise data pipelines were designed to handle.
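To put the fleet figure in concrete terms, the short calculation below multiplies the quoted per-vehicle range out to a week. It is a back-of-envelope sketch: the 1 to 40 TB range comes from the article, while the seven-day operating week and the function name `fleet_weekly_tb` are assumptions made for illustration.

```python
# Per-vehicle daily range quoted in the article, in terabytes.
TB_PER_VEHICLE_PER_DAY = (1, 40)


def fleet_weekly_tb(vehicles, days=7):
    """Return the (low, high) weekly data volume in TB for a fleet.

    Assumes every vehicle operates on each of `days` days (an assumption,
    not a figure from the article).
    """
    low, high = TB_PER_VEHICLE_PER_DAY
    return vehicles * days * low, vehicles * days * high


low_tb, high_tb = fleet_weekly_tb(10)
print(f"{low_tb} TB to {high_tb} TB per week")  # 70 TB to 2800 TB per week
```

Under these assumptions a ten-vehicle fleet lands somewhere between tens of terabytes and a few petabytes per week, depending almost entirely on the sensor suite.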

The problem compounds in two directions. First, storage costs accumulate fast when petabyte-scale data must be retained for model training, safety audits, and regulatory review. Second, and more importantly, most of that data is operationally inert — it can't be queried, filtered, or surfaced without significant manual labelling effort.

For robotics teams specifically, this creates a painful feedback loop:

- Deploy robots in the field

- Collect enormous volumes of sensor data

- Struggle to extract the specific failure scenarios, edge cases, or domain-specific events needed to improve the model

- Training iteration slows

- Deployment performance stagnates

Human annotation workflows — the traditional solution — don't scale economically. Labelling costs for autonomous driving datasets have historically run between $0.05 and $0.50 per frame, and a single hour of video at 30fps contains 108,000 frames. The economics actively discourage teams from leveraging the full data exhaust of their fleets.
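The per-frame figures above translate into a per-hour cost with simple arithmetic. The sketch below assumes a single camera stream at 30fps and uses the quoted $0.05 to $0.50 range; prices are held in cents to avoid floating-point drift, and the names are illustrative only.

```python
FPS = 30
SECONDS_PER_HOUR = 3600
# Quoted labelling cost range per frame, expressed in US cents.
COST_PER_FRAME_CENTS = (5, 50)


def frames_per_hour(fps=FPS):
    """Number of frames in one hour of video at the given frame rate."""
    return fps * SECONDS_PER_HOUR


def labelling_cost_per_hour_usd(fps=FPS):
    """Return the (low, high) cost in USD to label one hour of one stream."""
    frames = frames_per_hour(fps)
    low_cents, high_cents = COST_PER_FRAME_CENTS
    return frames * low_cents / 100, frames * high_cents / 100


low_usd, high_usd = labelling_cost_per_hour_usd()
print(frames_per_hour())                      # 108000
print(f"${low_usd:,.0f} to ${high_usd:,.0f}")  # $5,400 to $54,000
```

That is the cost of fully labelling one hour from one camera. A multi-camera vehicle multiplies it by the number of streams, which is why teams typically label only a small sample of what they record.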

How Does Nomadic's Deep Learning Approach Work?

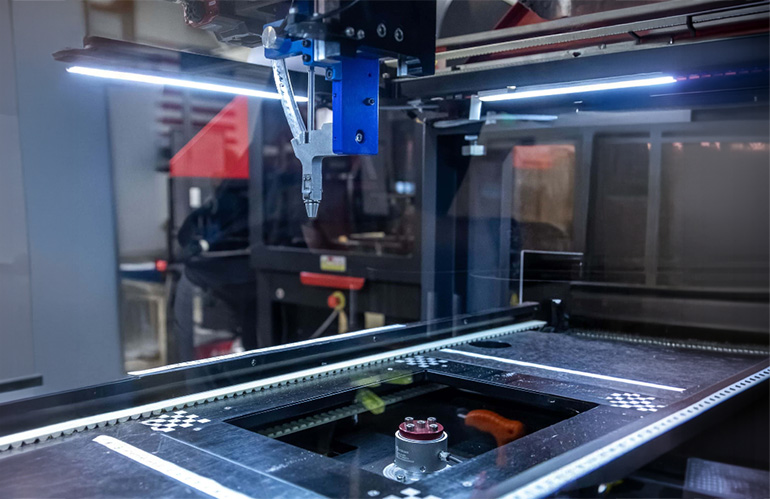

Nomadic's core system applies deep learning models to raw footage to automatically extract semantic structure from sensor streams. Rather than requiring engineers to manually label footage before it becomes searchable, the platform infers what is happening in a scene, tags events and objects, and organises the output into queryable form.

The practical implication is significant: robotics and AV teams can issue natural-language or structured queries — "show me all instances where the vehicle approached a pedestrian at under 2 metres in rain" — and surface relevant clips from millions of hours of footage without manual review.
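Once footage has been tagged, a query like the one quoted above reduces to a filter over structured event records. The sketch below is purely illustrative: the source does not describe Nomadic's schema or query interface, so the `SceneEvent` fields, the `find_close_approaches` function, and the sample records are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneEvent:
    """One auto-tagged event; a hypothetical schema, not Nomadic's."""
    clip_id: str
    actor: str             # e.g. "pedestrian", "cyclist"
    min_distance_m: float  # closest approach during the clip, in metres
    weather: str           # e.g. "rain", "clear"


def find_close_approaches(events, actor, max_distance_m, weather):
    """Return clip IDs where `actor` came closer than `max_distance_m`."""
    return [
        e.clip_id
        for e in events
        if e.actor == actor
        and e.min_distance_m < max_distance_m
        and e.weather == weather
    ]


# Made-up sample records for illustration.
EVENTS = [
    SceneEvent("clip-001", "pedestrian", 1.4, "rain"),
    SceneEvent("clip-002", "pedestrian", 3.2, "rain"),
    SceneEvent("clip-003", "cyclist", 1.1, "rain"),
    SceneEvent("clip-004", "pedestrian", 1.8, "clear"),
]

matches = find_close_approaches(EVENTS, "pedestrian", 2.0, "rain")
print(matches)  # ['clip-001']
```

The hard part in practice is producing records like these reliably from raw sensor streams. The query itself is cheap once the structure exists.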

This approach mirrors what modern vector databases do for unstructured text, but applied to multimodal sensor data including video, point clouds, and IMU streams. The deep learning model acts as an automatic annotation layer, dramatically reducing the cost per labelled example while increasing the density of extractable signal from existing data.
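The vector-database comparison can be made concrete with a toy similarity search. In the sketch below each clip is represented by an embedding vector and a query is answered by ranking clips on cosine similarity. The three-dimensional vectors and clip IDs are invented for illustration; a real system would use learned embeddings with hundreds of dimensions and an approximate nearest-neighbour index, and nothing here reflects Nomadic's actual implementation.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query, index, k=2):
    """Return the k clip IDs whose embeddings are most similar to `query`."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [clip_id for clip_id, _ in ranked[:k]]


# Toy embeddings (hypothetical values).
CLIP_EMBEDDINGS = {
    "clip-001": [0.9, 0.1, 0.0],
    "clip-002": [0.1, 0.9, 0.1],
    "clip-003": [0.8, 0.2, 0.1],
}

query_embedding = [1.0, 0.0, 0.0]
nearest = top_k(query_embedding, CLIP_EMBEDDINGS)
print(nearest)  # ['clip-001', 'clip-003']
```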

Nomadic vs. Traditional Data Pipeline Approaches

| Approach | Annotation Cost | Query Speed | Scalability | Edge Case Recall |
|---|---|---|---|---|
| Manual human labelling | High ($0.05–$0.50/frame) | Slow | Poor | Dependent on reviewer |
| Rule-based auto-tagging | Low | Fast | Moderate | Misses novel events |
| Nomadic deep learning | Low–Medium | Fast | High | Strong on trained categories |
| No pipeline (raw storage) | None | None | High (cost) | Zero |

The caveat worth noting: deep learning-based annotation inherits whatever blind spots exist in the model's training distribution. For rare, safety-critical edge cases — exactly the events most valuable for training — a model that hasn't seen enough examples may still fail to surface them reliably. Nomadic's long-term value proposition likely depends on how well its models generalise across diverse robot and vehicle deployments.
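One way a team might check for this blind spot is to measure retrieval recall per event category against a small hand-labelled reference set. The sketch below assumes such a reference set exists; the category names and clip IDs are made up, and the numbers show how the calculation works rather than how any real system performs.

```python
def recall(retrieved, relevant):
    """Fraction of the relevant clips that the system actually surfaced."""
    if not relevant:
        return 0.0
    return len(retrieved & relevant) / len(relevant)


# Hand-labelled ground truth per category (hypothetical).
RELEVANT = {
    "lane_change": {"a", "b", "c", "d"},
    "animal_on_road": {"w", "x", "y", "z"},
}

# What the automatic tagger surfaced for the same categories (hypothetical).
RETRIEVED = {
    "lane_change": {"a", "b", "c", "d"},
    "animal_on_road": {"x"},
}

per_category = {
    name: recall(RETRIEVED[name], RELEVANT[name]) for name in RELEVANT
}
print(per_category)  # {'lane_change': 1.0, 'animal_on_road': 0.25}
```

A large gap between common and rare categories, as in this toy data, would be the signal that rare events still need targeted human review.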

What This Means for Robotics and Automation

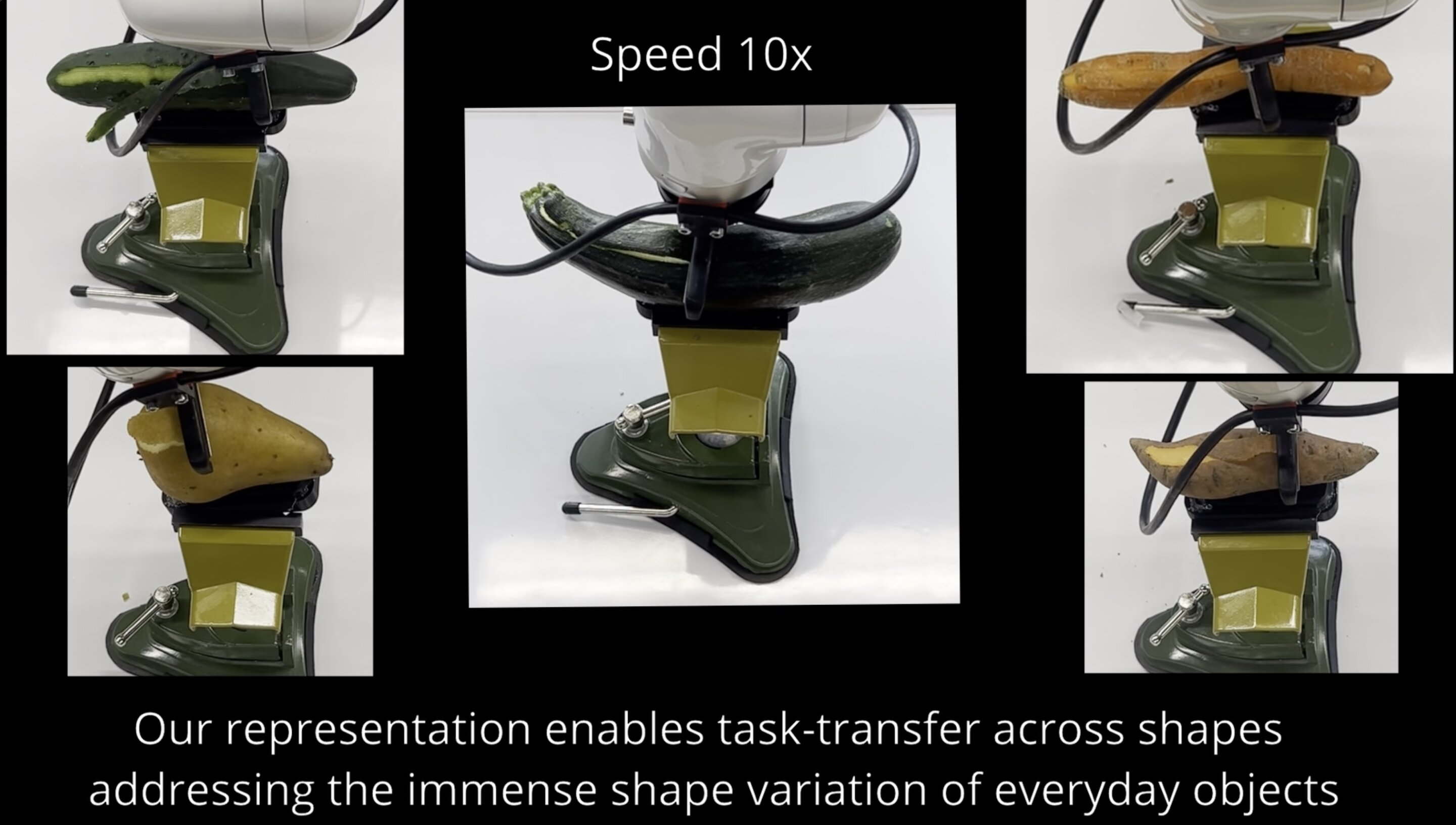

The data bottleneck Nomadic is attacking isn't unique to autonomous vehicles. It is the same problem facing warehouse AMRs (autonomous mobile robots), industrial inspection robots, agricultural automation systems, and humanoid robot programmes — any embodied AI system that generates continuous perceptual data in the real world.

For teams operating or procuring robot fleets, this matters in two concrete ways.

Training velocity: The rate at which a robotic system improves is directly constrained by how fast teams can extract meaningful training signal from deployment data. Infrastructure that accelerates that loop — even by a 2–3× factor — compresses the improvement timeline proportionally.

Fleet intelligence at scale: As robot fleets grow, the operational value of that sensor data extends beyond model training. Structured data unlocks anomaly detection, predictive maintenance signals, and performance benchmarking across units — turning the robot fleet itself into a continuously self-documenting system.

For operators considering used or refurbished robot deployments — where sensor configurations may vary and pre-existing datasets are less curated — platforms like Nomadic become particularly relevant. Feeding field data from used industrial robots back into structured training pipelines has historically been a manual, expensive process. Automated structuring infrastructure changes that calculus.

The $8.4 million seed figure also signals where infrastructure investment is flowing in the Physical AI stack. Hardware — the robots themselves — gets the attention. But the data layer between deployment and model improvement is increasingly where competitive advantage is built and where capital is beginning to concentrate.

Operators evaluating used cobots or building out small-scale automation programmes should factor data pipeline costs into their total cost of deployment. That is the cost Nomadic is positioning itself to reduce.

Frequently Asked Questions

What is Nomadic and what problem does it solve?

Nomadic is a data infrastructure company that uses deep learning to convert raw sensor footage from autonomous vehicles and robots into structured, searchable datasets. It addresses the scaling problem of autonomous systems data, where vast volumes of footage are generated in the field but remain operationally unusable without expensive manual annotation.

How much data does an autonomous vehicle generate per day?

A single autonomous vehicle typically generates between 1 and 40 terabytes of raw sensor data per day, depending on its camera, LiDAR, and radar configuration. A fleet of ten vehicles can accumulate hundreds of terabytes weekly, making manual processing economically untenable at scale.

How does Nomadic's deep learning approach differ from manual labelling?

Manual labelling costs between $0.05 and $0.50 per frame, making it prohibitively expensive at fleet scale. Nomadic applies deep learning models to automatically tag and index footage, enabling engineers to query across large datasets without frame-by-frame human review — significantly reducing annotation cost and time-to-insight.

Does the data bottleneck problem affect robots beyond autonomous vehicles?

Yes. Any embodied AI system — warehouse AMRs, inspection robots, agricultural automation, humanoid platforms — generates continuous sensor data that faces the same structuring and retrieval challenges. The problem scales with fleet size and operational hours regardless of the specific robotic application.

What does this funding mean for the broader Physical AI ecosystem?

The $8.4 million seed round signals growing investor recognition that the data infrastructure layer — not just hardware or core AI models — is a critical bottleneck in autonomous systems development. Infrastructure investment in data pipelines is a leading indicator of maturing Physical AI deployment programmes.

The data exhaust from autonomous systems has always been enormous. The missing piece has been the infrastructure to turn it into usable signal. Nomadic's approach — applying deep learning as an automatic structuring layer — addresses a constraint that affects every organisation deploying robots or vehicles at scale. The seed funding won't solve the problem overnight, but it marks a clear directional bet that the data layer is where the next competitive edge in Physical AI gets built.

Is data pipeline infrastructure the bottleneck limiting your robot fleet's improvement — or is the hardware still the constraint?
