LG is exploring a strategic partnership with NVIDIA to tackle the computational demands of physical AI, from data center cooling to edge inference for consumer robots. The discussions spotlight the low-latency processing pipeline required to make dexterous home assistants like LG’s CLOiD robot commercially viable, and they underscore the scale of infrastructure investment needed to move autonomous systems from simulation into real homes.

- How does edge inference impact home robots like LG CLOiD?

- Why are data center cooling solutions critical for physical AI?

- What role do digital twins play in training consumer robots?

- What This Means for Robotics Buyers

- Frequently Asked Questions

How does edge inference impact home robots like LG CLOiD?

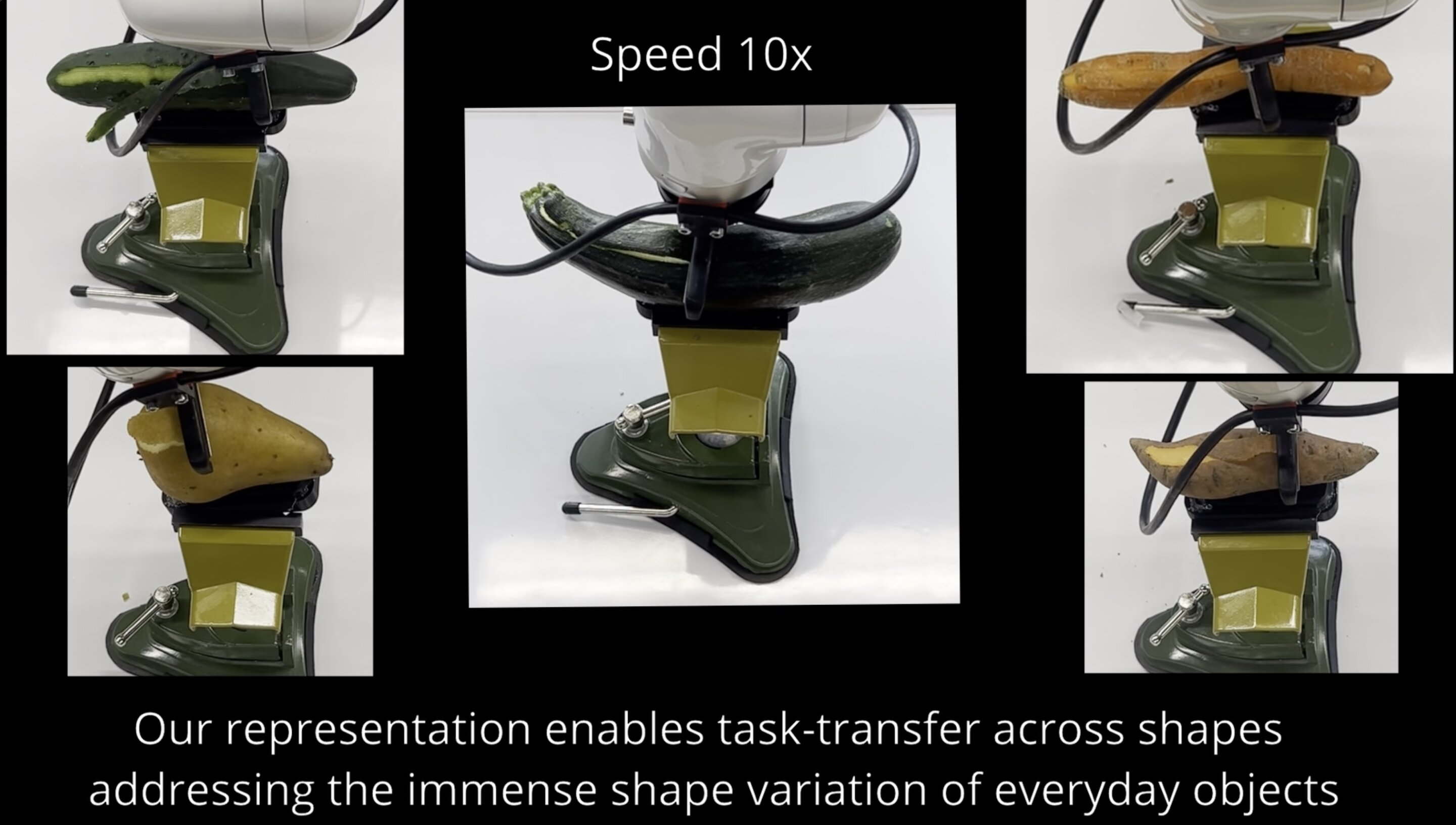

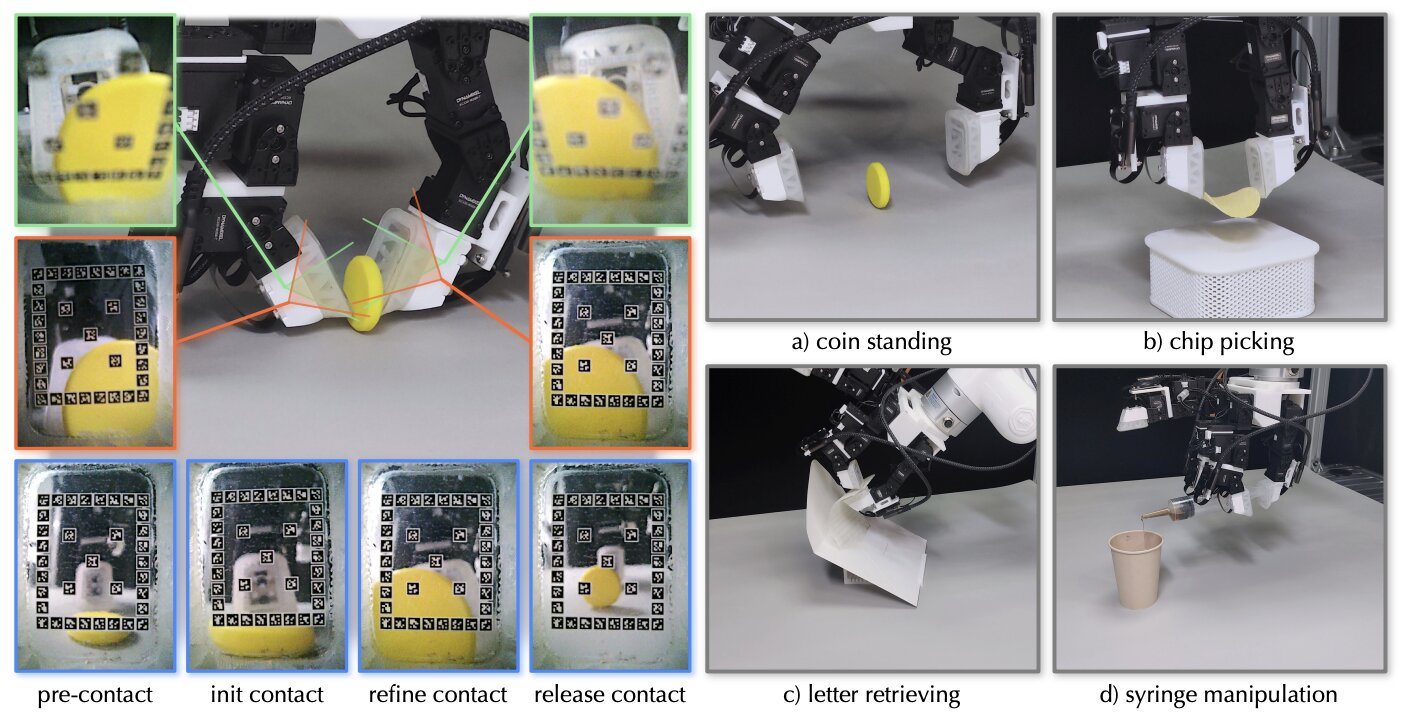

Edge inference means processing sensor data and running AI models directly on the device, without round-tripping to cloud servers. For a robot like LG’s CLOiD, which features dual arms with seven degrees of freedom each and five individually actuated fingers per hand, an inference delay of more than a few milliseconds could mean the difference between gently lifting a wine glass and shattering it on the countertop. That makes local compute non-negotiable for safe consumer operation.

When an articulated robot reaches for an object, the system must process real-time visual data, query local vector databases to identify the object’s properties, and calculate the exact grip force required—all within a single continuous motion. The inference pipeline is unforgiving: a miscalculation in force or trajectory translates directly into physical damage in someone’s living room. Cloud-dependent architectures simply cannot guarantee the deterministic, sub-10-millisecond response times that dexterous manipulation demands.
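
To make the latency argument concrete, here is a minimal sketch of a budget check for the three steps described above. The stage names and per-stage timings are hypothetical placeholders, not measurements from CLOiD or any NVIDIA hardware; only the 10-millisecond budget comes from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One step of the inference pipeline with an assumed latency."""
    name: str
    latency_ms: float


def check_budget(stages, budget_ms=10.0):
    """Return (total_ms, headroom_ms, fits) for stages that run back to back."""
    total = sum(stage.latency_ms for stage in stages)
    return total, budget_ms - total, total <= budget_ms


def with_cloud_round_trip(stages, network_rtt_ms):
    """Return the same pipeline with a network round trip appended."""
    return list(stages) + [Stage("network_round_trip", network_rtt_ms)]


# Hypothetical on-device timings (illustrative only).
on_device = [
    Stage("vision", 4.0),          # process real-time visual data
    Stage("object_lookup", 1.5),   # query the local vector database
    Stage("grip_force", 2.0),      # calculate the required grip force
]

if __name__ == "__main__":
    print(check_budget(on_device))
    # A 20 ms round trip is an assumed figure for illustration.
    print(check_budget(with_cloud_round_trip(on_device, 20.0)))
```

Under these assumed numbers the on-device pipeline fits the budget with headroom to spare, while adding any realistic network round trip pushes the total past it, which is the point the paragraph above makes about cloud-dependent architectures.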

The LG-NVIDIA discussions center on compressing this inference stack onto edge hardware. NVIDIA’s Isaac robotics platform and Jetson edge AI modules are built to run perception, planning, and control models locally, drastically cutting the cloud compute costs associated with continuous spatial mapping and video ingestion. For LG, which currently lacks in-house digital twin infrastructure and pre-trained manipulation libraries, adopting this proven pipeline could compress the timeline from prototype to mass production significantly. The economic calculus is straightforward: offloading inference to the cloud at scale would generate unsustainable per-robot operational costs, while edge processing keeps the recurring cost per device manageable for a consumer price point.
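
The cost argument can be written as a simple break-even calculation. The sketch below is illustrative only: every figure in it is a made-up placeholder, since neither LG nor NVIDIA has published per-robot costs, and the function names are hypothetical.

```python
import math


def break_even_months(edge_hw_cost, cloud_cost_per_month, edge_cost_per_month=0.0):
    """Months until cumulative cloud spend matches the edge hardware outlay.

    Returns None when cloud inference is never more expensive per month.
    """
    monthly_saving = cloud_cost_per_month - edge_cost_per_month
    if monthly_saving <= 0:
        return None
    return math.ceil(edge_hw_cost / monthly_saving)


def lifetime_costs(months, edge_hw_cost, cloud_cost_per_month, edge_cost_per_month=0.0):
    """Return (cloud_total, edge_total) per robot over the given lifetime."""
    cloud_total = cloud_cost_per_month * months
    edge_total = edge_hw_cost + edge_cost_per_month * months
    return cloud_total, edge_total


if __name__ == "__main__":
    # Placeholder values: 300 for the edge module, 25/month for cloud
    # inference, 5/month for residual edge costs (updates, telemetry).
    print(break_even_months(300.0, 25.0, 5.0))
    print(lifetime_costs(60, 300.0, 25.0, 5.0))
```

The useful output is the shape of the comparison rather than the numbers: a recurring per-robot cloud bill grows linearly with the device's lifetime, while edge hardware is mostly a one-time cost.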

The challenge intensifies when considering the sheer variability of home environments. A factory robot operates in a highly structured space with known lighting and fixed obstacles; a home robot faces children’s toys under the sofa, sudden changes in ambient light, and family members walking through the task space unpredictably. Edge inference models must generalize across this chaos without the safety net of a human operator standing by with an emergency stop button.

Why are data center cooling solutions critical for physical AI?

Training the large foundation models that underpin physical AI requires compute clusters of staggering density—and those clusters generate heat that conventional cooling infrastructure was never designed to handle. As NVIDIA’s data center business scales to meet AI demand, high-density server racks are pushing air cooling past its safe operating limits. When temperatures exceed thresholds, compute nodes throttle performance, destroying the return on investment for silicon that costs tens of thousands of dollars per unit.

LG’s commercial HVAC division has positioned itself as a thermal management supplier engineered specifically for AI data centers. At the latest consumer electronics showcase, the company presented high-efficiency cooling solutions that integrate directly into NVIDIA’s infrastructure ecosystem. This isn’t merely a facilities upgrade—it’s a margin-protection play. By replacing traditional air cooling with advanced liquid and refrigerant-based thermal management, operators can pack 30-40% more processing power into the same square footage without risking hardware burnout. For training runs that consume megawatt-hours over weeks, the energy savings alone justify the infrastructure investment.

The partnership logic is symbiotic: NVIDIA gets a thermal solution that keeps its GPU clusters running at full clock speed, and LG secures recurring enterprise revenue as a critical infrastructure supplier to the AI buildout. Rather than competing at the compute layer—a battle it cannot win—LG positions itself one layer below, selling the environmental control that makes that compute layer viable. This same thermal expertise also flows into the edge devices themselves: effective heat dissipation in compact consumer robots directly impacts how much inference compute can be packed into a living-room-safe form factor.

What role do digital twins play in training consumer robots?

Digital twins—high-fidelity virtual replicas of physical environments—allow robotic systems to be trained on simulated variability before ever touching real hardware. For home robots, this means learning to navigate unpredictable living rooms, adapt to changing lighting conditions, and respond to human interference without the trial-and-error that would cause property damage or safety incidents during physical training.

NVIDIA’s Omniverse platform provides the simulation backbone, while the Isaac robotics stack delivers pre-trained manipulation models that can be fine-tuned for specific embodiments. LG currently lacks both the digital twin infrastructure and the simulation environments needed to shorten its deployment pipeline without compromising safety. Building these from scratch would require years of development and a talent pool that is fiercely contested. Accessing NVIDIA’s mature stack changes the trajectory: LG can train CLOiD on thousands of virtual kitchens and living rooms simultaneously, testing edge-case object interactions at a scale that physical prototyping could never match.
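
Training across thousands of varied virtual rooms is commonly done by randomizing scene parameters, a technique usually called domain randomization. The sketch below shows the idea in plain Python. It does not call any Omniverse or Isaac API, and the `SceneConfig` fields and value ranges are assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneConfig:
    """Hypothetical parameters describing one simulated home scene."""
    room_type: str
    light_lux: float
    clutter_objects: int
    moving_people: int
    has_mirror: bool


def sample_scene(rng):
    """Draw one randomized scene from assumed parameter ranges."""
    return SceneConfig(
        room_type=rng.choice(["kitchen", "living_room"]),
        light_lux=rng.uniform(50.0, 1000.0),   # dim evening to bright daylight
        clutter_objects=rng.randint(0, 30),    # toys, dishes, loose items
        moving_people=rng.randint(0, 3),       # people crossing the task space
        has_mirror=rng.random() < 0.3,         # reflective surfaces confuse vision
    )


def sample_scenes(count, seed=0):
    """Return a reproducible list of randomized scenes."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(count)]


if __name__ == "__main__":
    for scene in sample_scenes(3, seed=42):
        print(scene)
```

Seeding the generator keeps a batch of scenes reproducible, so a failure found in one simulated kitchen can be replayed exactly while the model is being debugged.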

The consumer home presents dramatically greater training complexity than industrial settings. NVIDIA recently validated its robotics stack in a structured factory trial where a humanoid robot executed logistics operations over an eight-hour shift. That factory floor, while demanding, offers controlled lighting, predictable layouts, and trained personnel who understand robot safety protocols. A consumer living room with children, pets, mirrors, and random furniture arrangements is an entirely different challenge. Access to LG’s ThinQ ecosystem—spanning millions of connected homes—provides NVIDIA with the real-world data diversity needed to train models that generalize beyond sterile simulations. This move from industrial to consumer environments positions Omniverse as a universal development platform for embodied AI, mirroring how NVIDIA’s GPU architecture captured cloud computing a decade ago.

What This Means for Robotics Buyers

The LG-NVIDIA convergence signals that consumer-grade dexterous robots are transitioning from research curiosities to commercial product roadmaps, but the underlying infrastructure requirements reveal why mainstream availability remains distant. Buyers evaluating robots today should calibrate expectations around edge inference maturity and safety certification timelines.

For commercial and industrial buyers, the same digital twin and simulation infrastructure that trains home robots also accelerates deployment of warehouse automation, cobots, and inspection drones. The simulation-to-reality gap narrowing for home environments translates into faster commissioning and lower integration costs for factory and logistics applications. Those exploring humanoid robot platforms can browse humanoid robots on Botmarket to compare currently available models, while operations teams considering collaborative automation can evaluate used cobots for sale for immediate deployment.

| Capability | Industrial Robots (Current) | Home Robots (Near-Future) |
|---|---|---|
| Environment structure | Highly structured, predictable | Unstructured, constantly changing |
| Inference latency requirement | 10-50 ms acceptable | Sub-10 ms required for dexterity |
| Primary compute location | Often on-premise edge servers | On-device edge processor |
| Safety certification path | Established (ISO 10218, ISO/TS 15066) | Emerging, no unified standard |
| Training data diversity | Task-specific, limited variability | Requires millions of diverse home scenes |
| Cooling and thermal budget | Managed at rack or facility level | Constrained by consumer device form factor |

The table highlights why the inference and cooling challenges under discussion between LG and NVIDIA are not mere engineering details—they represent the critical path to making dexterous home robots affordable, safe, and commercially scalable. Organizations investing in robotics should monitor the edge inference solutions emerging from these partnerships, as they will directly influence the cost curves and capabilities of future automation platforms.

Frequently Asked Questions

What is physical AI?

Physical AI refers to artificial intelligence systems that interact with and manipulate the real world through robotic embodiments, as opposed to purely digital AI like chatbots or recommendation engines. It combines perception, planning, and physical actuation, requiring models to understand physics, spatial relationships, and tactile feedback.

What is LG’s CLOiD robot?

CLOiD is LG’s home robot concept featuring dual arms with seven degrees of freedom each and hands with five individually-actuated fingers, running on the company’s “Affectionate Intelligence” platform designed for contextual awareness and continuous learning from home environments.

How does NVIDIA’s Omniverse and Isaac stack help with robotics?

NVIDIA Omniverse provides digital twin simulation environments for training robots on virtual scenarios, while Isaac offers pre-trained models for perception, manipulation, and navigation. Together, they let developers train and validate robotic behaviors in simulation at scale before deploying to physical hardware, reducing development time and cost.

When will LG’s home robot be available?

LG has not announced a commercial release date for CLOiD. The ongoing discussions with NVIDIA suggest the company is still assembling the software and edge inference infrastructure needed to make the product safe and economically viable at consumer price points.

What edge inference challenges does CLOiD face?

The primary challenges are achieving deterministic sub-10-millisecond inference latency for real-time manipulation, running complex visual and force-control models on power-constrained consumer hardware, and handling the unpredictable variability of home environments without a remote operator as a safety fallback.

Why is cooling technology relevant to home robots?

The same thermal management expertise that cools AI data centers also determines how much processing power can be packed into a compact home robot without overheating. Effective heat dissipation directly impacts the robot’s inference capability, battery life, and safe operating temperature in a home setting.

Are you ready to trust a dexterous robot with an edge processor inside your living room?

The LG-NVIDIA talks mark a significant milestone in the physical AI convergence, exposing the inference, simulation, and thermal management hurdles that stand between today’s prototypes and tomorrow’s reliable home assistants. The path forward runs through edge compute and digital twins, with implications that extend far beyond a single consumer product.

Join the discussion

Will dexterous home robots need dedicated edge processors to ever be safe enough for consumers?