Last updated: April 2026

Ouster has launched the Stereolabs ZED X Nano, a compact wrist-mounted stereo camera targeting robotic manipulation, imitation learning, and high-throughput training data collection. The camera delivers 1920×1200 global shutter RGB and depth at up to 120fps over an industrial GMSL2 connection — a significant hardware step up from the USB-based 720p cameras that currently bottleneck most manipulation pipelines.

What is the ZED X Nano and what problem does it solve?

The ZED X Nano is a miniaturised stereo camera built specifically for end-of-arm placement on robotic manipulators — addressing a well-known pain point in manipulation research and deployment. Most wrist-mounted cameras today rely on USB-C connectivity, are limited to 720p resolution, and push frames through CPU-mediated pipelines that introduce latency precisely where manipulation tasks demand the tightest control loops.

Ouster's answer is a camera that is 40% shorter in height than comparable solutions, mounts directly onto wrists and end-of-arm tooling, and inherits the 1920×1200 global shutter sensor architecture of the flagship ZED X line. The minimum depth-sensing range is 3 cm, closer than most competing stereo cameras, which matters directly for near-field grasping and fine assembly work.

According to The Robot Report, Ouster CEO Angus Pacala framed the release explicitly around Physical AI: "The future of Physical AI depends on massive amounts of high-quality, low-latency image data collected at the edge."

ZED X Nano technical specifications

| Specification | ZED X Nano |
|---|---|
| Image resolution | 1920×1200 per eye |
| Max frame rate | 120 fps |
| Minimum depth range | 3 cm |
| Depth accuracy (Z-axis) | Sub-millimeter |
| Sensor type | Global shutter |
| Connectivity | GMSL2 (up to 15 m cable run) |
| IMU | Onboard, vibration-proof |
| EMI resistance | Yes (locking connectors) |
| Simulation integration | NVIDIA Isaac Sim / Isaac Lab |
| ROS support | ROS and ROS 2 native |
| GPU pipeline | Zero-copy, direct to NVIDIA hardware encoder |
| Form factor | 40% shorter than comparable wrist cameras |

The GMSL2 connection (Gigabit Multimedia Serial Link 2, an automotive-grade serial interface commonly used in ADAS systems) replaces fragile USB-C with an industrial-grade link designed for repeated cable flex and EMI-heavy factory environments. Video runs cleanly up to 15 meters — relevant for manipulators with long cable management runs or ceiling-mounted compute nodes.

How does ZED X Nano compare to Intel RealSense and Luxonis OAK?

For robotics teams evaluating wrist-mounted depth cameras, the ZED X Nano sits in a meaningfully different hardware tier than the two most common alternatives. Here is how the platforms compare across the dimensions that matter most for manipulation pipelines.

| Feature | ZED X Nano | Intel RealSense D405 | Luxonis OAK-D |
|---|---|---|---|
| RGB resolution | 1920×1200 | 1280×720 | 4056×3040 (stills) / 1080p video |
| Max depth frame rate | 120 fps | 90 fps | 60 fps |
| Min depth range | ~3 cm | ~7 cm | ~20 cm |
| Depth technology | Neural stereo (AI) | Passive stereo (no IR projector) | Passive stereo + optional IR |
| Connectivity | GMSL2 (industrial) | USB-C | USB-C |
| Cable run length | Up to 15 m | ~5 m practical | ~5 m practical |
| Onboard AI inference | Via host (NVIDIA Isaac) | Limited onboard | Yes (Myriad X VPU onboard) |
| ROS 2 support | Native | Native | Native |
| Simulation integration | NVIDIA Isaac Sim/Lab | Limited | Limited |
| Target use case | Manipulation, imitation learning | Close-range industrial inspection | Edge AI, mobile robotics |
| Availability | Pre-order, ships May 2026 | Available now | Available now |

The Intel RealSense D405 is the closest direct competitor for close-range manipulation — it was specifically designed for robot arms — but its 7 cm minimum depth range and 720p RGB capture are real constraints when training high-fidelity imitation learning datasets. The Luxonis OAK-D offers onboard Myriad X inference, which is a genuine advantage for edge-compute-constrained deployments, but its 20 cm minimum depth range effectively rules it out for the near-field grasping tasks ZED X Nano targets.

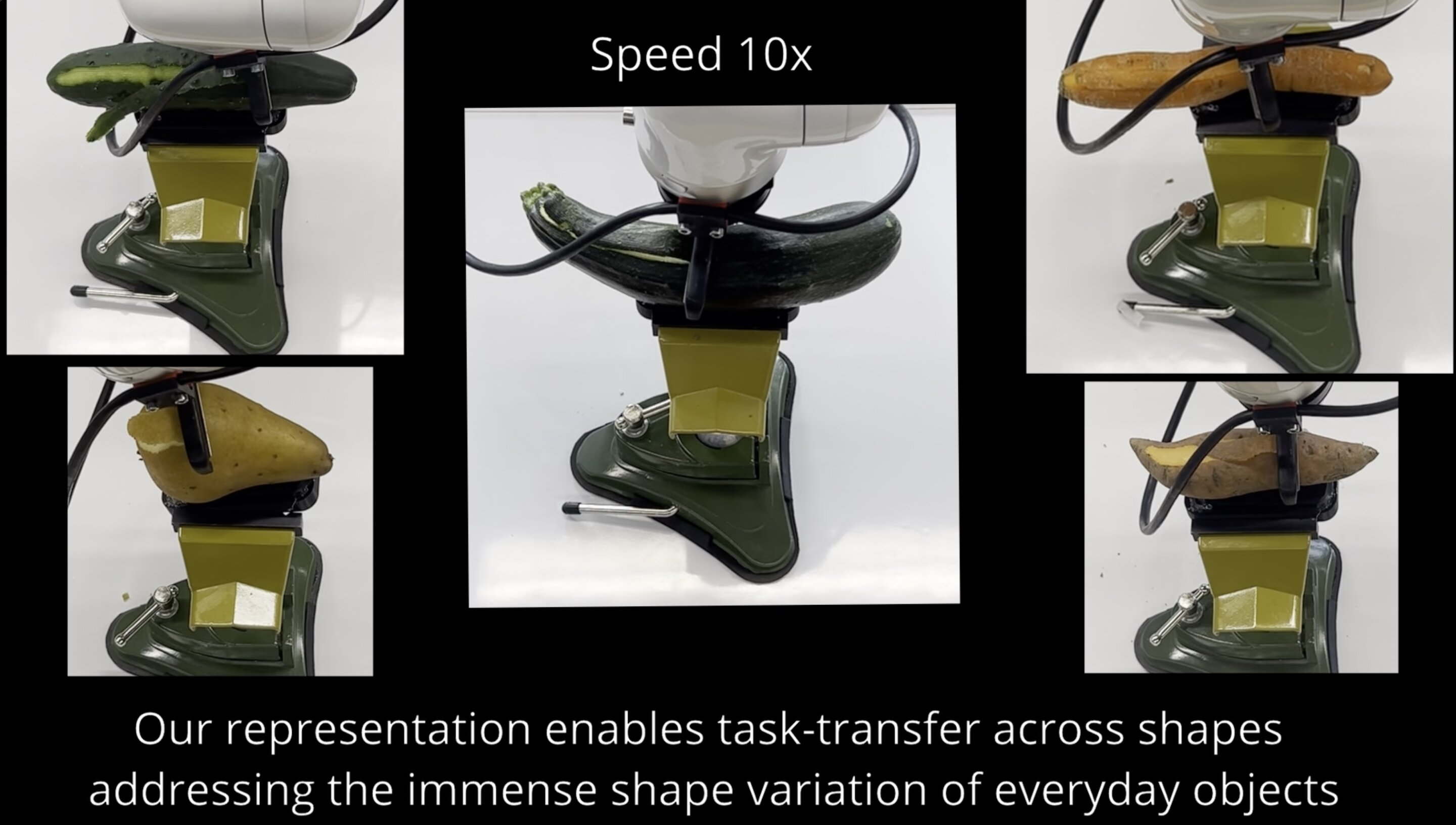

The ZED X Nano's neural depth engine (Stereolabs' AI stereo depth system) produces sub-millimeter Z-axis accuracy and, according to Ouster, better lateral XY positioning than structured-light or time-of-flight approaches. That matters for grasp pose estimation, where even 2-3 mm of lateral error can cause repeated manipulation failures.
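For a rough feel of how pixel-level errors turn into millimeters at the gripper, the textbook pinhole-stereo relations can be sketched as follows. This is classical stereo geometry, not Stereolabs' neural depth method, and the focal length, baseline, and error values are hypothetical placeholders rather than ZED X Nano specifications.

```python
def depth_error_m(z_m: float, focal_px: float, baseline_m: float,
                  disparity_err_px: float) -> float:
    """First-order depth error of classical stereo: dZ = Z^2 / (f * B) * dd."""
    return z_m ** 2 / (focal_px * baseline_m) * disparity_err_px


def lateral_error_m(z_m: float, focal_px: float, pixel_err_px: float) -> float:
    """Lateral error caused by a pixel localization error: dX = Z / f * du."""
    return z_m / focal_px * pixel_err_px


# Hypothetical optics, NOT taken from the ZED X Nano datasheet.
FOCAL_PX = 1000.0   # assumed focal length in pixels
BASELINE_M = 0.05   # assumed stereo baseline in meters

if __name__ == "__main__":
    for z in (0.05, 0.10, 0.30, 0.50):
        dz = depth_error_m(z, FOCAL_PX, BASELINE_M, disparity_err_px=0.1)
        dx = lateral_error_m(z, FOCAL_PX, pixel_err_px=1.0)
        print(f"Z={z:.2f} m  depth error={dz * 1e3:.3f} mm  "
              f"lateral error={dx * 1e3:.3f} mm")
```

Under these assumptions, depth error grows with the square of the working distance while lateral error grows linearly, so a camera that can operate close to the object has a geometric head start on both.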

Why the zero-copy GPU pipeline matters for Physical AI

This is the architectural detail most hardware comparisons skim over — and it may be the ZED X Nano's most consequential advantage for teams training manipulation policies at scale.

Traditional USB camera pipelines route frames through the CPU before they reach the GPU: sensor → USB controller → system RAM → CPU processing → GPU memory. Each hop adds latency and consumes CPU cycles. At 120fps and 1920×1200 resolution per eye, that pipeline becomes a genuine throughput bottleneck.
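The scale of that data stream can be estimated with simple arithmetic. The sketch below uses the resolution and frame rate quoted in this article; the pixel format (1 byte per pixel) and the 720p, 30 fps comparison stream are assumptions chosen for illustration, not published figures.

```python
def raw_rate_bytes_per_s(width: int, height: int, fps: int,
                         bytes_per_pixel: int, num_sensors: int = 1) -> int:
    """Uncompressed data rate of a camera stream in bytes per second."""
    return width * height * bytes_per_pixel * fps * num_sensors


# Resolution and frame rate from the article; 1 byte per pixel is an assumption.
stereo_120 = raw_rate_bytes_per_s(1920, 1200, 120, bytes_per_pixel=1, num_sensors=2)

# Hypothetical baseline: a single 720p stream at an assumed 30 fps.
usb_720p = raw_rate_bytes_per_s(1280, 720, 30, bytes_per_pixel=1)

if __name__ == "__main__":
    print(f"Stereo 1920x1200 @ 120 fps: {stereo_120 / 1e6:.1f} MB/s")
    print(f"Single 720p @ 30 fps:       {usb_720p / 1e6:.1f} MB/s")
    print(f"Ratio: {stereo_120 / usb_720p:.1f}x")
```

With these assumptions the stereo pair produces roughly 553 MB/s of raw pixels, about 20 times the 720p baseline. A wider pixel format scales both figures proportionally, and every extra copy through system RAM moves that volume again.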

The ZED X Nano implements a fully zero-copy path from sensor to GPU, with frames flowing directly into NVIDIA hardware encoders and AI inference pipelines simultaneously. For data collection teams, this means capturing full-resolution demonstration datasets without frame drops under concurrent workloads. For deployment teams running live manipulation, it means perception networks, segmentation models, and manipulation policy networks can run in parallel on the same incoming frames with significantly more GPU headroom remaining.

The native integration with NVIDIA Isaac Sim and Isaac Lab extends this advantage into the sim-to-real loop. Teams can capture demonstrations on the physical camera, train in simulation using a matched ZED X Nano camera model, and deploy back to hardware without swapping perception stacks or recalibrating between environments. For reinforcement learning and imitation learning workflows, keeping the same camera model on both sides of the sim-to-real boundary removes one source of mismatch between training and deployment.

What this means for robotics teams

The ZED X Nano is not an incremental update to wrist-mounted vision — it is a hardware tier change. Teams currently bottlenecked by USB-based 720p cameras in their manipulation pipelines have a clear upgrade path. The GMSL2 connection and ruggedised cable design also address a real operational cost that doesn't appear in spec sheets: USB-C cables on robot arms fail regularly under repeated flex, and replacing vision hardware mid-deployment is expensive.

The 3 cm minimum depth range is practically significant. Many manipulation tasks bring the camera within a few centimeters of the object, a distance at which competing stereo cameras return either no depth data or degraded accuracy. For assembly, kitting, and bin-picking tasks, this is a new capability rather than an incremental improvement.
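One way to see why minimum range is hard to shrink: in classical stereo matching, the closest measurable depth is set by the largest disparity the matcher searches. The sketch below illustrates that relation only. It does not describe how the ZED X Nano reaches 3 cm, and the focal length and baseline are hypothetical values.

```python
def min_depth_m(focal_px: float, baseline_m: float, max_disparity_px: float) -> float:
    """Closest depth a stereo matcher can report: Z_min = f * B / d_max."""
    return focal_px * baseline_m / max_disparity_px


def disparity_px(focal_px: float, baseline_m: float, z_m: float) -> float:
    """Disparity produced by a point at depth z: d = f * B / Z."""
    return focal_px * baseline_m / z_m


# Hypothetical optics, NOT taken from any vendor datasheet.
FOCAL_PX = 800.0    # assumed focal length in pixels
BASELINE_M = 0.03   # assumed stereo baseline in meters

if __name__ == "__main__":
    for d_max in (128, 256, 512):
        z_min = min_depth_m(FOCAL_PX, BASELINE_M, d_max)
        print(f"search range {d_max:4d} px -> minimum depth {z_min * 100:.1f} cm")
    needed = disparity_px(FOCAL_PX, BASELINE_M, 0.03)
    print(f"disparity needed at 3 cm: {needed:.0f} px")
```

With these placeholder optics, a point at 3 cm produces a disparity of hundreds of pixels, so reaching that range takes some combination of a short baseline, wide optics, and a matcher that handles very large disparities.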

Pre-orders are open now, with shipping beginning May 2026. Teams building imitation learning datasets or deploying dexterous manipulation systems in 2026 should evaluate the camera against their current hardware, particularly if they already run NVIDIA Isaac infrastructure.

For teams building or expanding manipulation-capable systems, browse industrial robots and automation hardware on Botmarket to compare platforms that pair with wrist-mounted vision systems like the ZED X Nano.

Frequently asked questions

What is the ZED X Nano?

The ZED X Nano is a wrist-mounted stereo camera developed by Stereolabs (an Ouster subsidiary) for robotic manipulation, imitation learning, and edge AI data collection. It captures 1920×1200 RGB and depth at up to 120 fps, with a minimum depth range of 3 cm, and it connects over an industrial GMSL2 link that feeds a zero-copy GPU pipeline. It is 40% shorter in height than comparable wrist-mounted camera solutions.

How does ZED X Nano depth accuracy compare to structured-light cameras?

The ZED X Nano uses Stereolabs' Neural Depth Engine, an AI-powered stereo depth system delivering sub-millimeter Z-axis accuracy. Ouster states it provides superior lateral (XY) positioning compared to traditional structured-light or time-of-flight cameras. That is a critical advantage for grasp pose estimation, where lateral errors of 2-3 mm can cause consistent manipulation failures in fine assembly tasks.

What connectivity does the ZED X Nano use and why does it matter?

The camera uses GMSL2 (Gigabit Multimedia Serial Link 2), an automotive-grade serial interface that replaces USB-C. GMSL2 supports cable runs up to 15 meters, provides EMI resistance, and uses locking connectors rated for the repeated flex and vibration stress of robotic arm motion. This eliminates a common failure point in deployed manipulation systems where USB cables degrade under continuous movement.

Does the ZED X Nano work with ROS and NVIDIA Isaac?

Yes. The ZED X Nano offers native ROS and ROS 2 support, and first-class integration with NVIDIA Isaac Sim and Isaac Lab for sim-to-real transfer. Teams can train with a matched ZED X Nano camera model in simulation and deploy to the identical physical sensor stack without recalibrating perception pipelines.

When does the ZED X Nano ship and how can I order it?

The ZED X Nano is available for pre-order now, with shipping beginning May 2026. Orders can be placed through Ouster and the Stereolabs product channel.

Is the ZED X Nano suitable for imitation learning data collection?

Yes — this is one of its primary design targets. The 120fps capture rate at full 1920×1200 resolution, zero-copy GPU pipeline, and native Isaac Lab integration are specifically architected for high-throughput demonstration recording at the frame rates and resolutions that modern imitation learning methods require.

The ZED X Nano represents a purposeful response to a genuine hardware bottleneck that has constrained manipulation research and deployment for years. Whether the Neural Depth Engine's XY accuracy claims hold up across diverse workpieces and lighting conditions will determine how quickly it displaces entrenched USB camera solutions — but the specification case is compelling on paper.

Is USB-based vision hardware the real bottleneck in your manipulation pipeline — or is the limiting factor elsewhere in the stack?
