Investors poured $6.1 billion into humanoid robots in a single recent year — four times the prior year's total. That capital surge didn't come from better motors or cheaper actuators. It came from a fundamental breakthrough in how robots learn, one that's been quietly building since 2015 and has now made the science-fiction robot a plausible engineering target.

- Why Robot Learning Changed Everything After 2015

- From Rules to Reinforcement: The Simulation Era

- How Foundation Models Gave Robots Common Sense

- The Limits That Still Hold the Industry Back

- What This Means for Robotics Buyers and the Hardware Market

- Frequently Asked Questions

Why Robot Learning Changed Everything After 2015

For most of robotics history, intelligence meant rules — thousands of hand-coded instructions written by engineers to cover every foreseeable situation. A robot arm folding laundry needed explicit logic for sleeve orientation, fabric stiffness, collar detection, and dozens of edge cases. The ruleset exploded in complexity before it ever became reliable.

That approach produced dependable industrial robots for structured environments — welding lines, pick-and-place cells, conveyor systems — but it couldn't generalise. Move the same arm to a different context, change the lighting, introduce a new object shape, and performance collapsed immediately.

The gap between what robots could do and what researchers dreamed they could do remained stubbornly wide. Then, around 2015, the methodology shifted.

According to MIT Technology Review's deep-dive into the contemporary history of robot learning, the pivotal change was moving from rule-encoding to data-driven trial and error — and then, after 2022, to AI foundation models that learned from internet-scale data rather than hand-crafted simulations alone.

From Rules to Reinforcement: The Simulation Era

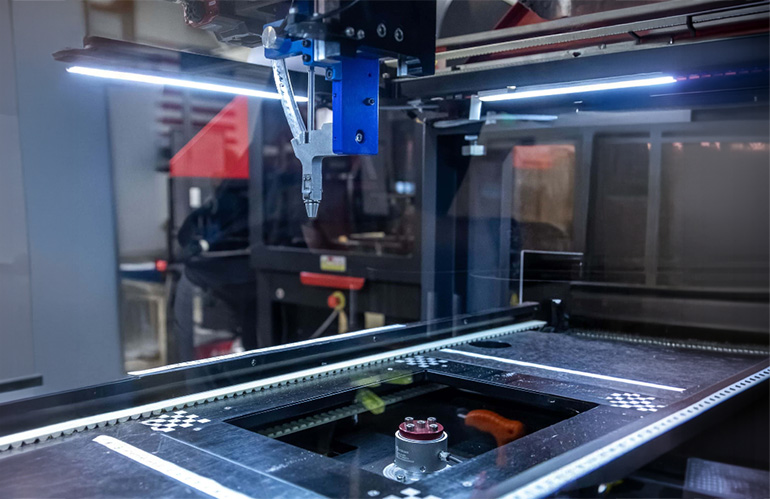

Around 2015, leading robotics labs began replacing hand-written rules with reinforcement learning (RL) — a training method where an AI agent receives reward signals for successful actions and penalty signals for failures, then iterates millions of times to discover its own strategies.
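To make the reward-and-penalty loop concrete, here is a minimal sketch of tabular Q-learning, one classic RL algorithm, on a toy six-cell corridor. The environment, the reward values, and every hyperparameter are assumptions chosen for illustration. It is not the method any particular lab used, and real robot policies rely on far larger neural-network learners.

```python
import random


def train_corridor_agent(n_states=6, episodes=500, alpha=0.5, gamma=0.9,
                         epsilon=0.2, seed=0):
    """Tabular Q-learning on a 1-D corridor; the goal is the right-most cell."""
    rng = random.Random(seed)
    moves = (-1, +1)  # action 0 steps left, action 1 steps right
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Explore at random sometimes (and on ties); otherwise exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            nxt = min(max(state + moves[a], 0), goal)
            # Reward for reaching the goal, small penalty for any other step.
            reward = 1.0 if nxt == goal else -0.01
            target = reward if nxt == goal else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            if state == goal:
                break
    return q


def greedy_policy(q):
    """Read off the preferred move in every non-goal cell."""
    return ["left" if left > right else "right" for left, right in q[:-1]]
```

Calling `greedy_policy(train_corridor_agent())` returns the move the agent prefers in each cell. Nothing in the code tells the agent to head right; that strategy emerges from the reward signal alone.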

OpenAI's Dactyl project, a five-fingered robotic hand trained entirely in simulation, demonstrated both the power and the core limitation of this approach. Dactyl learned to manipulate small cubes by training inside digital environments — essentially a virtual physics engine — before being deployed on real hardware. The problem: even minor discrepancies between the simulated world and physical reality caused performance to degrade sharply.

The engineering solution was domain randomisation — deliberately introducing random variation across millions of simulated training environments. Friction coefficients, lighting conditions, object colours, and surface textures were all varied randomly so that the trained policy would be robust enough to handle the messiness of the real world. The technique worked well enough that Dactyl eventually solved Rubik's Cubes — though only 60% of the time on standard scrambles, dropping to 20% on harder configurations.
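The idea can be sketched in a few lines: draw a fresh set of simulator parameters before every training episode. The parameter names, the value ranges, and the `texture_id` index into a texture library are hypothetical, chosen only to mirror the properties listed above. They are not Dactyl's actual configuration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float          # surface friction coefficient
    light_intensity: float   # relative brightness of the scene
    object_rgb: tuple        # colour of the manipulated object
    texture_id: int          # index into a hypothetical texture library


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one randomised environment; all ranges are illustrative."""
    return SimParams(
        friction=rng.uniform(0.5, 1.5),
        light_intensity=rng.uniform(0.3, 1.0),
        object_rgb=tuple(rng.random() for _ in range(3)),
        texture_id=rng.randrange(100),
    )


def randomised_episodes(n_episodes: int, seed: int = 0):
    """Yield a fresh set of simulator parameters for every training episode."""
    rng = random.Random(seed)
    for _ in range(n_episodes):
        yield sample_sim_params(rng)
```

A training loop would configure its simulator from each `SimParams` before running the episode, so the policy never sees exactly the same world twice.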

Those numbers matter for understanding where the field stood at the time. Simulation-trained RL produced genuinely impressive dexterity, but reliability was insufficient for commercial deployment. OpenAI shuttered its robotics division in 2021, reflecting the ceiling the technique had hit.

The Simulation-to-Reality Gap: Key Technical Challenges

| Challenge | Description | Mitigation Used |
|---|---|---|
| Visual mismatch | Colours and textures differ from simulation | Domain randomisation |
| Physical properties | Friction, deformation not perfectly modelled | Randomised physics parameters |
| Sensor noise | Real sensors introduce latency and error | Noise injection in training |
| Mechanical wear | Actuators degrade over time | Not solved by sim-to-real alone |
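As a rough illustration of the "noise injection in training" row, the sketch below wraps a clean simulated reading with Gaussian noise and a fixed delay. The class name `NoisySensor`, the noise level, and the delay length are assumptions made for the example; real pipelines typically randomise these values as well.

```python
import random
from collections import deque


class NoisySensor:
    """Wrap clean simulated readings with Gaussian noise and a fixed delay."""

    def __init__(self, noise_std=0.01, delay_steps=2, seed=0):
        self.noise_std = noise_std
        self.rng = random.Random(seed)
        # The buffer holds the newest reading plus `delay_steps` older ones.
        self.buffer = deque(maxlen=delay_steps + 1)

    def observe(self, true_value: float) -> float:
        """Return a delayed, noisy version of the true simulator value."""
        self.buffer.append(true_value)
        delayed = self.buffer[0]  # oldest reading still in the buffer
        return delayed + self.rng.gauss(0.0, self.noise_std)
```

During the first few steps the buffer is not yet full, so the sensor simply repeats the earliest reading. After that, each observation lags the simulator by `delay_steps` steps.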

How Foundation Models Gave Robots Common Sense

The arrival of large language models changed robotics more profoundly than any hardware advance of the past decade. The key insight was architectural: LLMs learn by predicting what token (word, sub-word, or character) comes next in a sequence, ingesting massive corpora of text to build rich internal representations of language and world knowledge. Roboticists asked an obvious but transformative question — could the same architecture work if the tokens were sensor readings, camera frames, and joint positions instead of words?

Google DeepMind's answer was RT-1 and its successor RT-2 (Robotic Transformer). RT-1 was trained on 17 months of teleoperation data covering 700 distinct tasks, taking robot camera images and natural-language instructions as inputs and generating motor commands as outputs. On tasks it had seen during training, it achieved 97% success. On entirely novel instructions, it still managed 76%, a dramatic improvement over anything simulation-only approaches had achieved.
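One way to picture motor commands as tokens is uniform binning: each continuous action dimension is clipped to a range and mapped to an integer bin index, which a transformer can then predict like any other token. The sketch below assumes 256 bins and caller-supplied per-dimension ranges. The function names are hypothetical, and this is an illustration of the general idea rather than a reproduction of any model's actual tokenizer.

```python
def action_to_tokens(action, low, high, n_bins=256):
    """Map each continuous action dimension to an integer bin index."""
    tokens = []
    for value, lo, hi in zip(action, low, high):
        clipped = min(max(value, lo), hi)
        fraction = (clipped - lo) / (hi - lo)
        # The top edge of the range falls into the last bin.
        tokens.append(min(int(fraction * n_bins), n_bins - 1))
    return tokens


def tokens_to_action(tokens, low, high, n_bins=256):
    """Recover the centre of each bin as the continuous command."""
    return [lo + (t + 0.5) * (hi - lo) / n_bins
            for t, lo, hi in zip(tokens, low, high)]
```

Round-tripping a command through both functions loses at most half a bin width per dimension, which is the precision traded away for a discrete vocabulary.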

RT-2 went further by incorporating internet-scale image and text data, giving the robot a form of common sense grounded in the broader visual world rather than just the robotics lab. This is the key conceptual leap: instead of programming robots with rules, or training them purely on robot-specific data, researchers discovered that general world knowledge — the kind baked into vision-language models during web-scale pretraining — transferred surprisingly well to physical manipulation tasks.

The practical implication is significant. A robot that has seen millions of images of kitchens, drawers, and cups during pretraining arrives with contextual understanding that rule-based systems could never acquire. It isn't certain which cup the human wants, but it has a reasonable prior. That prior dramatically reduces the amount of robot-specific training data required to reach useful performance levels.

The Limits That Still Hold the Industry Back

The current excitement is real, but it's worth mapping what remains genuinely unsolved. Foundation models for robotics face a data problem that doesn't exist for language models in the same form. Text data is abundant, cheap, and easily scraped from the web. High-quality robot demonstration data — diverse, physically grounded, and accurately labelled — is expensive to collect, hardware-dependent, and difficult to transfer between robot morphologies.

Early social robots illustrate a different limitation: capability without reliability. Jibo, the MIT-developed home social robot that raised $3.7 million in crowdfunding and retailed at $749, had a compelling product vision but was ultimately undermined by the pre-LLM language technology of its era. Its conversations relied on scripted response snippets that quickly felt repetitive and shallow. Today's voice AI would transform what Jibo could have been, but the new generation of AI-powered toys introduces the opposite risk. Scripted systems couldn't go off the rails; generative AI systems absolutely can, as documented cases of AI companions giving children dangerous guidance have demonstrated.

The field has traded one set of limitations (rigidity, brittleness) for another (unpredictability, safety uncertainty). Neither problem is fully solved. What has changed is that the trajectory of improvement is now measurably steeper.

What This Means for Robotics Buyers and the Hardware Market

The AI learning revolution isn't just an academic story — it's already reshaping hardware valuations in ways that matter to buyers and operators right now.

Robots whose capabilities were locked to their original programming depreciate quickly in the current market. Second-generation industrial arms with fixed motion programs have declining resale value as buyers increasingly expect adaptability. Meanwhile, hardware platforms designed to run learning-based software — with accessible compute, open APIs, and sufficient sensor payloads — are holding value more robustly.

For buyers evaluating purchases today, several implications stand out:

- Platform extensibility matters as much as current capability. A cobot that runs modern ML inference locally will have a longer useful life than one locked to vendor-specific programming environments.

- Used hardware pricing reflects AI readiness. Robots from platforms that have received major learning-based software updates retain value; those left behind by their manufacturers are being discounted significantly.

- Data infrastructure is the new differentiator. Buyers deploying multiple units should plan for teleoperation data collection from day one — that demonstration data becomes the training corpus for improved performance.

For operators considering entry-level deployment, the current used industrial robot market offers access to capable hardware at reduced cost, though buyers should assess software update roadmaps carefully. Similarly, the growing cobot category is particularly well-positioned to benefit from foundation model deployment, given cobots' inherently flexible, human-adjacent operating contexts.

Frequently Asked Questions

What drove the recent surge in humanoid robot investment?

The primary driver was the maturation of AI foundation models: specifically, the discovery that vision-language models trained on internet-scale data could be adapted to generate robot motor commands with far greater generalisation than previous rule-based or simulation-only approaches. Investment surged after research demonstrated that models like RT-2 could perform novel tasks without task-specific training, unlocking a credible path to general-purpose robots. Recent figures show investment quadrupling year-over-year, reaching $6.1 billion.

What is domain randomisation in robotics and why does it matter?

Domain randomisation is a simulation training technique in which many slightly different virtual environments are generated during training, with lighting, friction, object colours, and physics parameters varied at random. It addresses the sim-to-real gap (the performance degradation when simulation-trained policies run on physical hardware) by forcing the learned policy to be robust across many possible world configurations. OpenAI's Dactyl used this approach to solve Rubik's Cubes with a robotic hand, though success rates plateaued at 60% on standard scrambles and fell to 20% on harder ones.

How do foundation models for robotics differ from standard LLMs?

Standard large language models process text tokens as both input and output. Robotics foundation models extend this architecture to treat camera frames, depth sensor readings, and robot joint positions as additional input tokens, and motor velocity commands as output tokens. The core prediction task — "what comes next given prior context?" — remains structurally similar. The critical advantage is that pretraining on internet-scale visual and language data gives these models world knowledge and common sense that pure robot demonstration data cannot efficiently provide.

Will AI-adaptive robots make older fixed-program robots obsolete quickly?

Not immediately. Fixed-program industrial robots remain highly cost-effective for high-volume, low-variation tasks like welding and stamping, where adaptability provides no value. Obsolescence pressure is highest in mixed-SKU logistics, light assembly, and service environments where task variability is inherent. Buyers should evaluate whether their specific task profile actually benefits from adaptability before assuming newer AI-capable platforms justify the price premium over proven legacy hardware.

What are the main unsolved problems in robot learning today?

Three challenges remain significant: (1) the high cost and limited availability of diverse robot demonstration data compared to text data for language models; (2) the safety unpredictability of generative AI systems deployed in physical environments, particularly those interacting with vulnerable populations; and (3) reliable dexterous manipulation, since fine motor tasks like threading cables or handling deformable materials still defeat most current systems outside controlled lab settings.

The robot-learning revolution is real, but it is not finished. Foundation models have shattered the ceiling that rule-based systems imposed, and the investment numbers reflect genuine technological progress rather than pure speculation. The gap between science-fiction robots and deployable hardware has narrowed more in the past three years than in the preceding three decades.

The next constraint isn't algorithmic. It's data, safety validation, and hardware reliability at scale — the hard engineering problems that funding alone cannot accelerate beyond a certain pace.

Which robot learning approach — reinforcement learning, foundation models, or teleoperation data — do you think will determine who wins the humanoid race?
