Runway — best known for AI video generation — is quietly assembling a robotics-focused team and fine-tuning its world models for physical AI applications including autonomous vehicles and robotics systems. The move signals a broader industry shift: foundation model companies are increasingly treating embodied AI as their next major revenue frontier, joining NVIDIA, Google DeepMind, and others already racing to own that stack.

- The AI-to-Robotics Pipeline Is Real

- What Runway Actually Brings to Physical AI

- Why Video Generation Models Matter for Robots

- The Competitive Landscape: Who Else Is Targeting Physical AI

- What This Means for Robotics

- Frequently Asked Questions

The AI-to-Robotics Pipeline Is Real

Foundation model companies are no longer content serving creative professionals and enterprise software teams. The robotics and autonomous vehicle markets represent a multi-hundred-billion-dollar deployment opportunity that requires exactly what these companies already build: large-scale world models trained on visual and spatial data. Runway is the latest to formally pursue this direction, according to TechCrunch, by hiring robotics-focused engineers and adapting its existing models for physical applications.

This is not a pivot — it's an extension. Runway's core capability is generating temporally coherent video from learned world representations. That same capability, retooled, becomes a simulation and perception engine for machines that need to understand how the physical world moves and changes. The overlap is larger than it first appears.

The pattern is now well-established across the industry. NVIDIA built Isaac Sim and physical AI tooling directly on top of its GPU and simulation infrastructure. Google DeepMind spun up robotics research divisions and published RT-2, demonstrating that vision-language models transfer meaningfully to robot control. Physical AI startup Figure AI licensed OpenAI models to power humanoid reasoning. The pipeline from foundation model capability to robot deployment is becoming an explicit product strategy — not a research curiosity.

What Runway Actually Brings to Physical AI

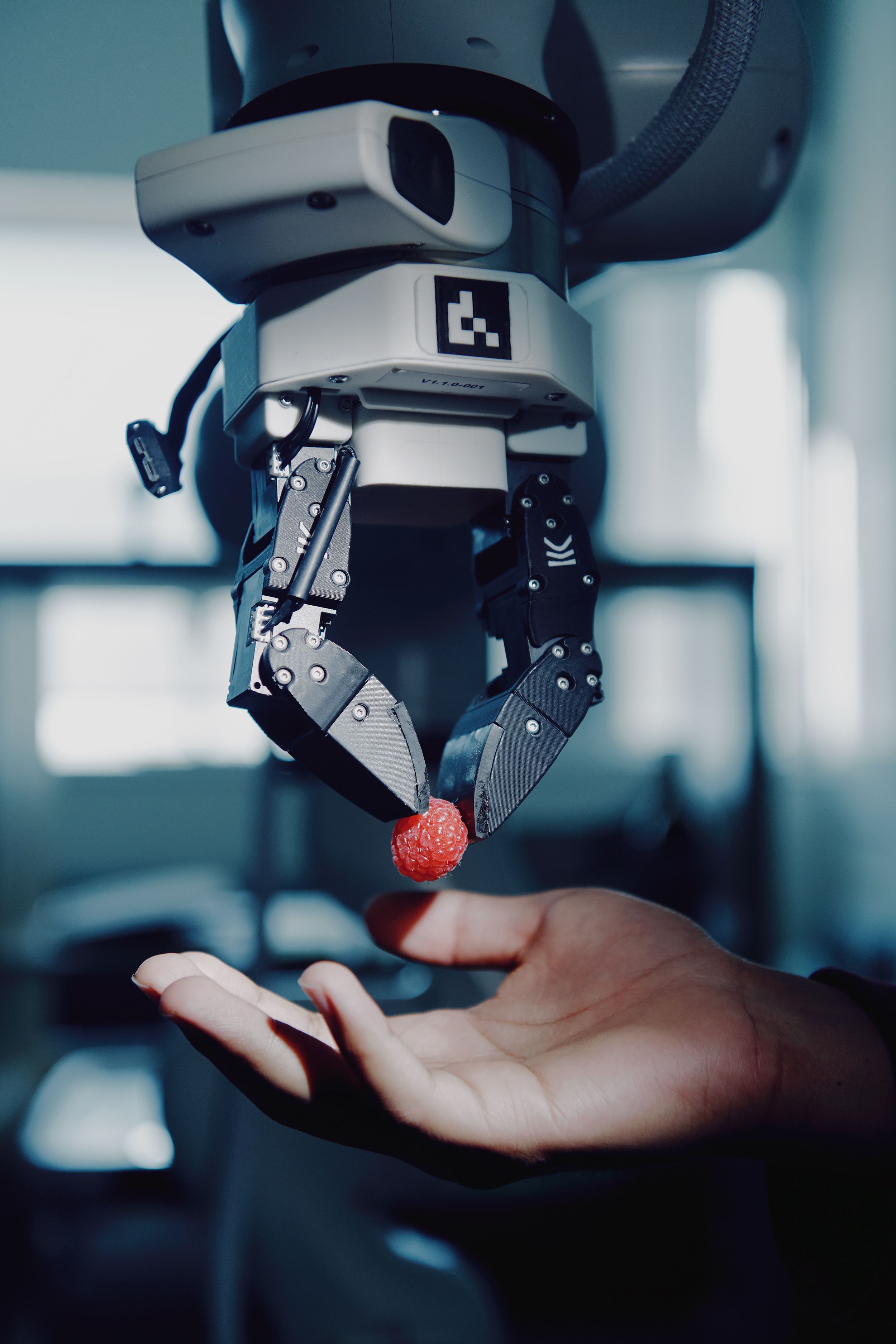

Runway's value proposition in robotics rests on its world modeling capability — its models don't just generate images, they model how scenes evolve over time. For robotics, this matters enormously. Robots operating in unstructured environments need to predict what happens next: how an object will move when pushed, how a human will step around an obstacle, whether a surface will hold weight.

The company is reported to be fine-tuning its existing models for robotics and self-driving customers specifically. That fine-tuning process is significant. Pre-trained world models reduce the data burden on robotics teams, who historically have needed enormous amounts of domain-specific training data to achieve reliable real-world performance. If Runway's models can provide a strong visual-spatial prior that robotics engineers then specialise, the development cycle shortens considerably.

The self-driving angle is equally strategic. Autonomous vehicle companies have long used synthetic data and simulation to augment real-world training datasets — it's one of the core bottlenecks in AV development. A world model that generates photorealistic, physically plausible driving scenarios at scale has direct commercial value to any AV lab still working on edge-case coverage.
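As a rough illustration of the augmentation arithmetic, the sketch below computes how many synthetic clips would be needed to lift a rare scenario to a target share of a training set. The scenario and counts are invented for illustration and do not come from Runway or any AV lab, and `synthetic_needed` is a hypothetical helper, not part of any real toolkit.

```python
import math


def synthetic_needed(rare_count: int, total_count: int, target_share: float) -> int:
    """Smallest s such that (rare_count + s) / (total_count + s) >= target_share."""
    if not 0 <= target_share < 1:
        raise ValueError("target_share must be in [0, 1)")
    # Rearranging the inequality gives s >= (t * N - r) / (1 - t).
    deficit = target_share * total_count - rare_count
    if deficit <= 0:
        return 0  # the scenario already meets the target share
    return math.ceil(deficit / (1 - target_share))


# Hypothetical dataset: 100,000 real clips, 200 of which show the rare scenario.
extra = synthetic_needed(rare_count=200, total_count=100_000, target_share=0.05)
print(extra)
```

Because every synthetic clip also enlarges the dataset, the required number is larger than the raw shortfall of 4,800 clips in this made-up case. That is one reason cheap generation at scale matters for edge-case coverage.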

Why Video Generation Models Matter for Robots

The connection between video AI and robotics is more technical than it might appear. Consider what a video generation model actually learns: it internalises a compressed representation of how the visual world behaves — lighting, physics, object permanence, motion dynamics. These are precisely the properties that make robots competent in unstructured environments.

Here's where the analogy holds and where it breaks down. Video models learn a statistical model of the world from passive observation. Robots need causal models — understanding not just what typically happens, but what will happen given a specific action the robot takes. Runway's models, trained on passive video, will require significant adaptation to support action-conditioned prediction. That's the hard part, and it's where dedicated robotics fine-tuning becomes essential rather than optional.
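A toy example makes the gap concrete. The sketch below is a hypothetical one-dimensional world, not Runway's architecture: an object moves one cell left or right depending on which way it is pushed, and two tabular predictors are fit to the same logged transitions. All function names are illustrative assumptions.

```python
from collections import defaultdict

ACTIONS = (-1, +1)  # hypothetical actions: push left, push right


def step(position: int, action: int) -> int:
    """Toy dynamics: the object moves one cell in the pushed direction."""
    return position + action


def collect_transitions(positions, actions=ACTIONS):
    """Log (position, action, next_position) for every position/action pair."""
    return [(p, a, step(p, a)) for p in positions for a in actions]


def fit_passive(transitions):
    """Passive model: average next position per position, ignoring the action."""
    sums, counts = defaultdict(float), defaultdict(int)
    for p, _action, nxt in transitions:
        sums[p] += nxt
        counts[p] += 1
    return {p: sums[p] / counts[p] for p in sums}


def fit_action_conditioned(transitions):
    """Action-conditioned model: average next position per (position, action)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for p, a, nxt in transitions:
        sums[(p, a)] += nxt
        counts[(p, a)] += 1
    return {key: sums[key] / counts[key] for key in sums}


def mean_abs_error(predict, transitions):
    """Average absolute prediction error over the logged transitions."""
    return sum(abs(predict(p, a) - nxt) for p, a, nxt in transitions) / len(transitions)


data = collect_transitions(range(5))
passive = fit_passive(data)
conditioned = fit_action_conditioned(data)

passive_error = mean_abs_error(lambda p, a: passive[p], data)
conditioned_error = mean_abs_error(lambda p, a: conditioned[(p, a)], data)
print(passive_error, conditioned_error)  # prints "1.0 0.0"
```

In this toy world the passive model predicts that the object stays put on average, because it cannot tell which push occurred. The action-conditioned model recovers the dynamics exactly. Real video models face a far harder, high-dimensional version of the same problem.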

The teams reportedly being hired at Runway presumably include engineers who understand this gap and are working to close it. Whether Runway's architecture can bridge that gap as efficiently as purpose-built systems like NVIDIA's Isaac or 1X Technologies' proprietary models remains to be seen.

Key Capability Comparison

| Capability | Runway (current) | Robotics requirement | Gap |
|---|---|---|---|
| Visual world modeling | Strong | Required | Small |
| Temporal coherence | Strong | Required | Small |
| Action-conditioned prediction | Limited | Critical | Large |
| Sim-to-real transfer | Unproven | Critical | Unknown |
| Physical plausibility | Moderate | High | Moderate |

The Competitive Landscape: Who Else Is Targeting Physical AI

Runway is entering a field that is already crowded with well-capitalised players. Understanding the competitive dynamics helps clarify whether this is a genuine opportunity or a speculative bet.

NVIDIA has arguably the strongest integrated position: Isaac Sim for synthetic data generation, CUDA for model training, Jetson for edge inference, and now the Thor compute platform targeting automotive and robotics. It owns the hardware and increasingly the software stack.

Google DeepMind brings the deepest robotics research bench, with work spanning imitation learning, reinforcement learning, and vision-language-action (VLA) models. RT-2 and subsequent models demonstrated that internet-scale pre-training meaningfully transfers to manipulation tasks.

Physical AI startups — including 1X Technologies, Physical Intelligence (π), and Covariant — are building robotics-native foundation models from the ground up, optimised specifically for action and control rather than adapted from generative video.

Sora (OpenAI) represents the closest analogue to Runway's position: a video world model from a company with the stated ambition of building physical world simulators. OpenAI also partnered with Figure AI on humanoid robotics, although Figure announced in early 2025 that it was ending that collaboration.

What Runway offers that some of these players lack is a commercialised model API and an existing base of enterprise customers. The robotics market is hungry for accessible, fine-tunable foundation models rather than vertically integrated black boxes. If Runway can position itself as the "robotics fine-tuning layer," it occupies a defensible niche even without building full-stack robotics infrastructure.

What This Means for Robotics

The entry of foundation model companies into physical AI has concrete downstream effects for robotics buyers, developers, and integrators.

For robotics developers: access to pre-trained world models via API could significantly reduce the time and data required to train perception and prediction systems. Rather than collecting thousands of hours of domain footage, teams may be able to fine-tune a Runway-style model on hundreds of hours. This is the same efficiency gain that large language models delivered to software developers — but applied to physical systems.

For industrial buyers: this trend accelerates the timeline on capable, general-purpose robots. The bottleneck for deploying used industrial robots has often been the perception and planning software, not the mechanical hardware. As foundation model companies compete to serve robotics customers, that software layer becomes cheaper, more capable, and more accessible.

For the broader market: the convergence of generative AI companies and physical AI represents a structural shift in how robots get built. Robots increasingly won't be programmed from scratch — they'll be instantiated from pre-trained world models and adapted to specific deployment contexts. That changes the economics of robot development dramatically.

If you're evaluating the current generation of AI-enabled robots for warehouse, logistics, or manufacturing use cases, browse humanoid robots on Botmarket to see what's already commercially available as this software layer matures underneath them.

Frequently Asked Questions

What is Runway doing in robotics?

Runway is building a dedicated robotics team and fine-tuning its existing AI video and world models for robotics and autonomous vehicle customers. The company is adapting its generative AI capabilities, specifically its ability to model how visual scenes evolve over time, for physical AI applications that require understanding of real-world dynamics.

How do video generation models help robots?

Video generation models learn compressed representations of how the physical world behaves, including motion dynamics, object interactions, and spatial relationships. These representations can serve as pre-trained priors for robot perception and prediction systems, potentially reducing the amount of domain-specific training data robotics teams need to collect. The key limitation is that passive video models must be further adapted to support action-conditioned prediction — understanding what happens when a robot takes a specific action.

Who are Runway's main competitors in physical AI?

Runway's primary competitors for physical AI model infrastructure include NVIDIA (Isaac Sim, Thor platform), Google DeepMind (RT-2 and successor VLA models), OpenAI (Sora world model, earlier Figure AI partnership), and robotics-native foundation model startups such as Physical Intelligence (π) and Covariant. Each brings different architectural strengths; Runway's differentiator would be its existing API infrastructure and enterprise customer base.

Will this make robots cheaper or easier to deploy?

Increased competition among foundation model providers targeting robotics tends to compress costs and improve accessibility over time. If Runway and competitors succeed in offering fine-tunable world models via API, robotics developers could reduce perception and planning development costs substantially. However, significant technical gaps — particularly around action-conditioned prediction and sim-to-real transfer — must be closed before these models deliver production-grade reliability in unstructured environments.

What types of robots would benefit most from Runway's models?

Autonomous vehicles and mobile manipulation robots stand to benefit most in the near term, as both require strong visual-spatial prediction capabilities in dynamic environments. Industrial fixed-arm robots operating in highly structured settings have less need for general world modeling. The commercial sweet spot for Runway-style models is likely in logistics, inspection, and service robotics where environmental variability is high.

Runway's move into robotics is the latest confirmation that the boundary between generative AI and physical AI is dissolving. The companies that own the world modeling layer may ultimately shape how the next generation of robots perceives and navigates reality.

Which foundation model company do you think is best positioned to own the physical AI stack — and does Runway have a realistic shot?
