A new sensor called FingerEye integrates tactile feedback and visual perception into a single fingertip-mounted unit, giving robots the ability to assess objects both before and after making contact. Developed by researchers targeting the persistent gap in dexterous manipulation, the system could accelerate progress in humanoid robotics and warehouse automation — two domains where unreliable grasping remains a costly bottleneck.

- What Is the FingerEye Sensor and How Does It Work?

- Why Dexterous Manipulation Is Still an Unsolved Problem

- How FingerEye Combines Pre-Contact and Post-Contact Sensing

- What This Means for Robotics and Automation

- Frequently Asked Questions

What Is the FingerEye Sensor and How Does It Work?

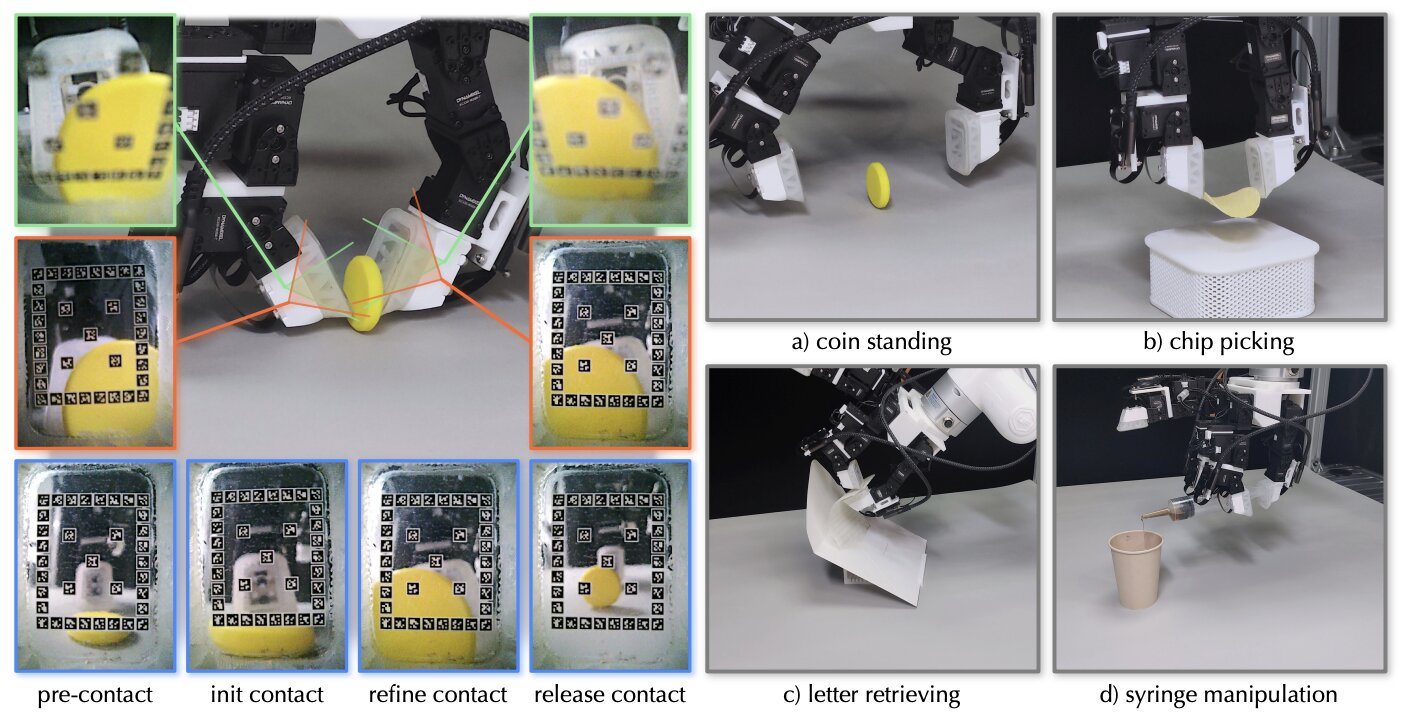

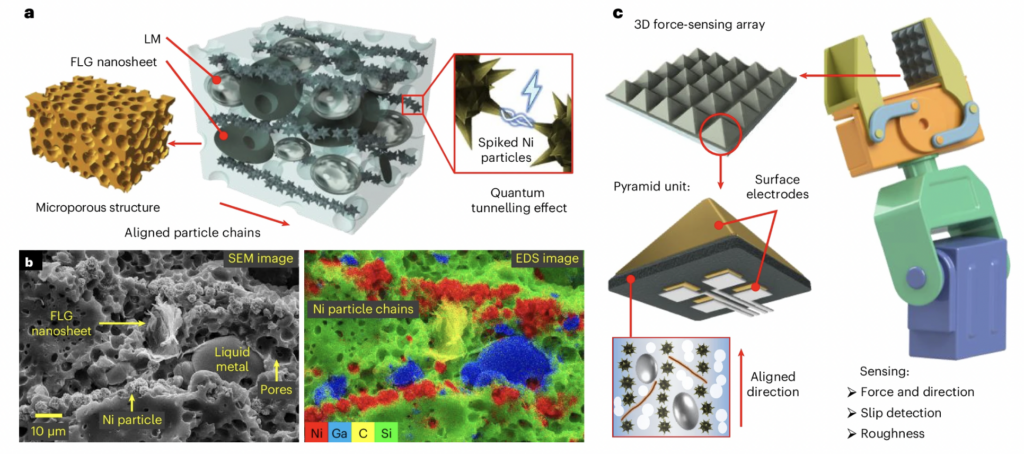

FingerEye is a compact, fingertip-mounted sensor that merges a vision module with a tactile sensing surface, allowing a robot to gather object information during approach and refine its grip model the moment contact is made. The key insight is that a single sensor handles both sensing phases — eliminating the coordination overhead between separate camera and tactile subsystems that has complicated previous designs.

The sensor embeds a small camera behind a compliant (deformable) gel layer. Before contact, the camera functions as a close-range visual sensor, capturing geometry and surface texture as the finger moves toward a target object. The moment the gel makes contact, it deforms, and the same camera reads the deformation pattern — a well-established principle known as visuotactile sensing (using camera-based imaging to infer force and contact geometry from gel deformation).
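To make the visuotactile principle concrete, here is a minimal Python sketch of the simplest possible contact readout. It assumes a conventional gel sensor whose unloaded image is stable. Each gel image is compared against a reference frame captured with nothing touching the gel, and pixels that changed by more than a threshold are treated as the contact patch. This is a deliberately simplified proxy, not FingerEye's method. Sensors such as GelSight reconstruct surface geometry with photometric stereo rather than raw frame differencing. The function names, thresholds, and list-of-lists image format are assumptions for illustration.

```python
from typing import List, Optional, Tuple

# Grayscale image as rows of pixel intensities (0-255); an assumed format.
Image = List[List[int]]
Mask = List[List[bool]]


def deformation_mask(reference: Image, frame: Image, pixel_threshold: int = 20) -> Mask:
    """Flag pixels whose intensity moved more than pixel_threshold from the reference."""
    return [
        [abs(f - r) > pixel_threshold for r, f in zip(ref_row, frame_row)]
        for ref_row, frame_row in zip(reference, frame)
    ]


def contact_summary(mask: Mask) -> Tuple[int, Optional[Tuple[float, float]]]:
    """Return the contact area in pixels and the (row, col) centroid of the patch."""
    points = [(r, c) for r, row in enumerate(mask) for c, hit in enumerate(row) if hit]
    if not points:
        return 0, None
    area = len(points)
    centroid = (sum(r for r, _ in points) / area, sum(c for _, c in points) / area)
    return area, centroid


def is_in_contact(reference: Image, frame: Image, min_area: int = 3) -> bool:
    """Declare contact once enough pixels have deformed (min_area is illustrative)."""
    area, _ = contact_summary(deformation_mask(reference, frame))
    return area >= min_area


if __name__ == "__main__":
    reference = [[100] * 5 for _ in range(5)]  # unloaded gel
    pressed = [row[:] for row in reference]
    for r in (1, 2):
        for c in (2, 3):
            pressed[r][c] = 160  # a 2x2 patch brightens under load
    print(contact_summary(deformation_mask(reference, pressed)))  # (4, (1.5, 2.5))
    print(is_in_contact(reference, pressed))  # True
```

The centroid gives a crude contact location. Real sensors extract much richer signals, such as depth maps, shear fields, and slip, from the same images.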

What distinguishes FingerEye from earlier visuotactile sensors such as GelSight or DIGIT is the deliberate architectural decision to serve both modes from one optical path. Earlier designs optimised primarily for post-contact tactile readout, treating pre-contact vision as a secondary concern or offloading it to separate wrist-mounted cameras entirely. FingerEye treats both regimes as first-class sensing targets.

Technical architecture at a glance

| Feature | FingerEye | Typical visuotactile sensor |
|---|---|---|
| Pre-contact visual sensing | Yes (integrated) | Typically absent or external |
| Post-contact tactile sensing | Yes (gel deformation) | Yes |
| Sensor count per finger | 1 | 1–2 (often supplemented) |
| Sensing continuity across contact | Continuous | Discontinuous (mode switch) |
| Target application | Dexterous manipulation | Mostly grasp quality estimation |

Why Dexterous Manipulation Is Still an Unsolved Problem

Pick-and-place — moving an object from point A to point B — is largely solved at an industrial scale. The hard problem is what comes after: repositioning, reorienting, tool use, in-hand manipulation. These tasks require a robot to maintain a continuously updated model of how an object sits in its grip, and to adjust that model in real time as the object moves.

Most current robotic systems fail here for a predictable structural reason. Their sensing pipeline has a perceptual discontinuity at contact: cameras see the world clearly until the finger occludes the object, and tactile sensors only activate once contact is already established. The transition between these two sensing regimes produces a window of uncertainty — the robot commits to a grasp trajectory based on visual data, then waits for tactile confirmation, and has limited ability to course-correct mid-approach.

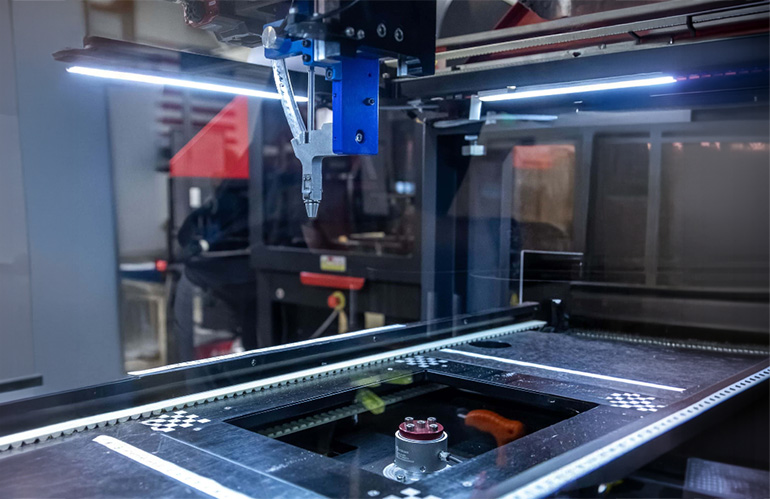

This matters enormously for warehouse automation. A robotic picking system handling irregular or deformable items, such as soft packaging, produce, or odd-shaped hardware parts, needs to anticipate how an object will respond before touching it, then verify and adapt in real time. Grasping failures in high-throughput environments don't just waste cycle time; they trigger downstream faults, require human intervention, and reduce the effective throughput of the whole cell.

For humanoid robots, the stakes are even higher. A humanoid operating in an unstructured environment — a home, a hospital, a workshop — encounters object variety that cannot be pre-programmed. The robot must generalise, and generalisation requires rich, continuous sensory data across the entire manipulation sequence.

How FingerEye Combines Pre-Contact and Post-Contact Sensing

The continuity FingerEye achieves across the contact boundary is the core technical contribution. As the finger approaches an object, the camera captures surface geometry and texture at close range — feeding pose estimation and grasp planning algorithms with object-specific data rather than relying solely on scene-level camera feeds. This pre-contact visual data allows the robot to refine its grip strategy in the final centimetres of approach, a phase most systems treat as a dead zone.
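Refining the grasp from close-range data can be pictured as a fusion problem. The sketch below combines a coarse object-width estimate from a scene camera with a fingertip-camera estimate using standard inverse-variance weighting. It is an assumption rather than the researchers' published algorithm. It also uses a hypothetical noise model in which the fingertip estimate grows more reliable as the finger closes in. The names, units, and noise constants are illustrative.

```python
from typing import Tuple


def fuse_estimates(scene_mm: float, scene_var: float,
                   tip_mm: float, tip_var: float) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of two independent width estimates.

    The less noisy estimate gets proportionally more weight, and the fused
    variance is smaller than either input variance.
    """
    if scene_var <= 0 or tip_var <= 0:
        raise ValueError("variances must be positive")
    w_scene = 1.0 / scene_var
    w_tip = 1.0 / tip_var
    fused = (w_scene * scene_mm + w_tip * tip_mm) / (w_scene + w_tip)
    return fused, 1.0 / (w_scene + w_tip)


def tip_variance(distance_mm: float, k: float = 0.01, floor: float = 0.25) -> float:
    """Hypothetical noise model: fingertip estimates sharpen as the finger closes in."""
    return max(floor, k * distance_mm ** 2)


if __name__ == "__main__":
    scene_width, scene_var = 42.0, 9.0  # coarse estimate from a scene camera
    for distance in (100.0, 30.0, 5.0):  # final approach, in mm
        width, var = fuse_estimates(scene_width, scene_var, 38.0, tip_variance(distance))
        print(f"{distance:5.0f} mm away -> width {width:.1f} mm (var {var:.2f})")
```

As the distance shrinks, the fused width moves from near the scene estimate toward the fingertip estimate, and its variance drops. This is the quantitative version of "object-specific data rather than scene-level feeds."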

At the moment of contact, the gel deforms, and the optical pattern shifts from external-world imaging to gel-deformation imaging. The same underlying camera hardware now reads contact geometry: which parts of the fingertip are loaded, how the load is distributed, whether the contact is stable or sliding. This transition happens without switching sensor modalities, data formats, or processing pipelines — the continuity is structural, not just logical.
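The claim that one pipeline spans both phases can be illustrated with a sketch in which every frame has the same record type and only a phase label changes. The deformation score, thresholds, and class names below are assumptions. The two-threshold (hysteresis) rule is a generic technique for debouncing a noisy signal, not a documented FingerEye feature.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List


class Phase(Enum):
    APPROACH = "approach"  # camera images the scene through the gel
    CONTACT = "contact"    # camera images the gel's own deformation


@dataclass
class Frame:
    t: float            # timestamp in seconds
    deformation: float  # hypothetical metric: fraction of gel pixels deformed (0-1)


@dataclass
class LabeledFrame:
    t: float
    deformation: float
    phase: Phase


def label_phases(frames: Iterable[Frame],
                 enter_threshold: float = 0.05,
                 exit_threshold: float = 0.02) -> List[LabeledFrame]:
    """Label every frame, using two thresholds (hysteresis) so that noise
    hovering near a single threshold cannot make the phase flicker."""
    if exit_threshold > enter_threshold:
        raise ValueError("exit_threshold must not exceed enter_threshold")
    in_contact = False
    labeled = []
    for f in frames:
        if not in_contact and f.deformation > enter_threshold:
            in_contact = True
        elif in_contact and f.deformation < exit_threshold:
            in_contact = False
        phase = Phase.CONTACT if in_contact else Phase.APPROACH
        labeled.append(LabeledFrame(f.t, f.deformation, phase))
    return labeled


if __name__ == "__main__":
    stream = [Frame(0.00, 0.00), Frame(0.01, 0.01), Frame(0.02, 0.08),
              Frame(0.03, 0.04), Frame(0.04, 0.01)]
    print([lf.phase.value for lf in label_phases(stream)])
    # ['approach', 'approach', 'contact', 'contact', 'approach']
```

In the example, the frame at 0.03 s stays labelled as contact even though its score has dropped below the entry threshold. It only leaves contact once the score falls under the lower exit threshold.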

The practical benefit is a tighter sensorimotor loop (the cycle from sensing to motor command). A robot using FingerEye can, in principle, begin adjusting grip parameters while still approaching an object, rather than committing fully and then reacting once contact is established. This shifts manipulation from reactive to predictive — a meaningful capability upgrade for tasks involving fragile, irregular, or dynamically moving objects.
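As a rough illustration of acting during approach rather than only after contact, here is a toy controller. It is hypothetical: the observation fields, gains, and force limits are invented for the example, and a real hand would run a far richer policy. Before contact, it steers the gripper aperture toward a fingertip-camera width estimate. After contact, it holds the aperture and steps up grip force while the gel reports slip.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    in_contact: bool
    width_estimate_mm: Optional[float] = None  # fingertip-camera estimate before contact
    slipping: bool = False                     # slip flag read from the gel after contact


@dataclass
class GripCommand:
    aperture_mm: float
    force_n: float


def next_command(obs: Observation, prev: GripCommand,
                 margin_mm: float = 5.0, gain: float = 0.5,
                 force_step_n: float = 0.5, max_force_n: float = 10.0) -> GripCommand:
    """One cycle of a hypothetical sense-to-command loop."""
    if not obs.in_contact:
        if obs.width_estimate_mm is None:
            return prev  # nothing seen yet: keep the current plan
        # Pre-contact: steer the aperture toward the estimated width plus a margin,
        # closing a fraction (gain) of the remaining gap on each cycle.
        target = obs.width_estimate_mm + margin_mm
        return GripCommand(prev.aperture_mm + gain * (target - prev.aperture_mm), prev.force_n)
    # Post-contact: hold the aperture and raise force in small steps while slipping.
    if obs.slipping:
        return GripCommand(prev.aperture_mm, min(prev.force_n + force_step_n, max_force_n))
    return prev


if __name__ == "__main__":
    cmd = GripCommand(aperture_mm=80.0, force_n=2.0)
    for obs in (Observation(False, 40.0), Observation(False, 40.0),
                Observation(True, slipping=True), Observation(True)):
        cmd = next_command(obs, cmd)
        print(cmd)
```

The point of the sketch is structural. The pre-contact branch lets the hand start adjusting before anything touches, which is the shift from reactive to predictive the paragraph describes.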

Researchers report that the design also reduces the mechanical complexity of instrumented robot hands. Eliminating the need for separate approach cameras and fingertip tactile arrays reduces cabling, calibration burden, and points of failure — all practical concerns when scaling to multi-fingered humanoid hands where space and weight budgets are tight.

What This Means for Robotics and Automation

For robotics developers and buyers, FingerEye represents a direction rather than a shipping product — but the direction is significant. The core problem it addresses, the pre-to-post contact sensing gap, is not niche. It affects every manipulation-heavy application: surgical robotics, logistics, food handling, electronics assembly, and humanoid general-purpose manipulation.

For warehouse and logistics operators, the near-term implication is continued pressure on robot vendors to close the sensing gap in picking systems. Solutions like FingerEye, if validated at scale, would reduce the dependency on hand-engineered grasp libraries for specific SKUs — a major hidden cost in robotic picking deployments. Buyers evaluating used industrial robots for manipulation tasks should track whether vendor roadmaps include visuotactile upgrades, as this will increasingly separate competitive systems from legacy ones.

For humanoid robotics developers, integrated fingertip sensing is a recognised gap in current-generation platforms. Most humanoids shipping or in late development rely on wrist-mounted cameras and minimal fingertip sensing. FingerEye's architecture — a single sensor covering the full approach-to-manipulation sequence — aligns with what multi-fingered humanoid hands will need to handle unstructured real-world tasks. Those building on or evaluating humanoid robots should watch how quickly sensor designs like this migrate from lab demonstrations to production-ready fingertip modules.

For AI and perception researchers, FingerEye also has implications for training data. A sensor that captures continuous pre- and post-contact data in a unified format makes it significantly easier to collect the kind of rich manipulation datasets that reinforcement learning and imitation learning systems require. Better sensors generate better training data, which generates more capable manipulation policies — a compounding effect.
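The dataset point is easiest to see with a concrete record layout. The sketch below assumes a JSON Lines format with invented field names and file paths. Approach and contact frames share one schema, so a label such as time-to-contact can be computed across the contact boundary in a single pass. With two separate sensors, the same label would typically first require aligning two streams with different clocks and formats.

```python
import json
from typing import Dict, List, Optional


def annotate_time_to_contact(frames: List[Dict]) -> List[Dict]:
    """Add 'time_to_contact_s' to every frame of one episode.

    Frames before first contact get the seconds remaining until contact;
    frames at or after first contact get 0.0; episodes that never touch
    anything get None.
    """
    first_contact: Optional[float] = next(
        (f["t"] for f in frames if f["phase"] == "contact"), None)
    annotated = []
    for f in frames:
        if first_contact is None:
            ttc = None
        else:
            ttc = max(0.0, round(first_contact - f["t"], 6))
        annotated.append({**f, "time_to_contact_s": ttc})
    return annotated


def to_jsonl(frames: List[Dict]) -> str:
    """Serialise one record per line; pre- and post-contact share the same keys."""
    return "\n".join(json.dumps(f, sort_keys=True) for f in frames)


if __name__ == "__main__":
    episode = [
        {"t": 0.0, "phase": "approach", "image": "episode_000/frame_000.png"},
        {"t": 0.1, "phase": "approach", "image": "episode_000/frame_001.png"},
        {"t": 0.2, "phase": "contact", "image": "episode_000/frame_002.png"},
    ]
    print(to_jsonl(annotate_time_to_contact(episode)))
```

Time-to-contact is just one example. Any label that depends on what happens on both sides of first contact benefits from the same unified layout.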

Frequently Asked Questions

How is FingerEye different from GelSight? GelSight and similar visuotactile sensors are optimised for post-contact tactile readout: they tell you what happened after the finger touched something. FingerEye extends the same optical sensing principle to the pre-contact phase, giving robots continuous object information during approach as well as after contact. This eliminates the perceptual gap that forces most manipulation systems to commit to a grasp strategy before they have full tactile information.

What types of robots would benefit most from FingerEye-style sensors? Multi-fingered robotic hands performing dexterous manipulation tasks benefit most — this includes humanoid robot hands, surgical robot end-effectors, and advanced picking arms in logistics. Simple parallel-jaw grippers handling uniform objects in structured environments gain less, since their manipulation tasks don't require the continuous in-hand sensing FingerEye provides.

How does pre-contact visual sensing improve grasp success rates? By capturing close-range geometry and surface texture during the final approach, the robot can refine its grasp plan in real time rather than executing a fixed strategy based on scene-level camera data. This is particularly valuable for irregular, deformable, or reflective objects where top-down camera feeds provide insufficient detail for reliable grasp planning.

Is FingerEye a commercial product available for purchase? Based on current reporting, FingerEye is a research prototype. It has not been announced as a commercial product. Commercialisation timelines for academic tactile sensing research typically range from two to five years, depending on whether the researchers pursue licensing, spin-out, or publication-only routes.

What is the biggest remaining challenge for visuotactile sensing in robots? Durability and calibration stability under real-world conditions are the primary engineering challenges. Gel-based visuotactile sensors can degrade with repeated contact cycles, and their calibration can drift as the gel material changes. Achieving the sensing accuracy seen in lab demonstrations over thousands of manipulation cycles in industrial or domestic environments remains an active research problem.
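A common mitigation for slow calibration drift is to keep refreshing the no-contact reference image while the gel is known to be unloaded. This is offered here as an assumption, not something the FingerEye researchers describe. The sketch applies an exponential moving average to that reference; the `unloaded` flag and the `alpha` value are illustrative. For a see-through design like FingerEye, whose camera also images the scene before contact, the reference would presumably need to be captured under controlled idle conditions. The technique addresses gradual drift only, not physical wear of the gel.

```python
from typing import List

Image = List[List[float]]


def update_reference(reference: Image, frame: Image, unloaded: bool,
                     alpha: float = 0.05) -> Image:
    """Exponential moving average of the no-contact reference image.

    Only frames captured while the gel is unloaded are blended in, so real
    contact deformation is never absorbed into the baseline. Larger alpha
    tracks drift faster but also follows noise more closely.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    if not unloaded:
        return reference
    return [
        [(1.0 - alpha) * r + alpha * f for r, f in zip(ref_row, frame_row)]
        for ref_row, frame_row in zip(reference, frame)
    ]


if __name__ == "__main__":
    ref = [[100.0, 100.0]]
    # Gel slowly brightens as it ages; idle frames pull the baseline along.
    for _ in range(3):
        ref = update_reference(ref, [[110.0, 110.0]], unloaded=True, alpha=0.25)
    print(ref)
    # A frame taken during contact leaves the baseline untouched.
    print(update_reference(ref, [[200.0, 200.0]], unloaded=False) == ref)
```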

Tactile sensing has long been described as the missing sense in robotics — FingerEye is a credible step toward closing that gap by treating approach and contact as a single continuous sensing problem rather than two separate ones. Whether this specific design reaches production or inspires the next generation of fingertip sensors, the architectural principle it demonstrates is sound and the problem it solves is real.

Which manipulation task do you think benefits most from continuous pre-contact sensing — warehouse picking or humanoid dexterity?
