Touch is the sense holding humanoid robots back, and a new graphene-based tactile sensor from the University of Cambridge may be the most credible fix yet. Published in Nature Materials, the device detects 3D force vectors, surface texture, and object slip in real time, at a spatial resolution that rivals human fingertips. For humanoid platforms like Figure, Apptronik, and Tesla Optimus, the absence of that kind of touch sensing has long been the quiet limiter on dexterous manipulation.

- Why Touch Is the Hardest Sense to Engineer Into Robots

- How the Graphene Tactile Sensor Actually Works

- Benchmark Performance: How Does It Compare?

- What This Means for Humanoid Robots and Cobots

- What This Means for Robotics Buyers and Developers

- Frequently Asked Questions

Why Touch Is the Hardest Sense to Engineer Into Robots

Most robotic systems can see with millimetre-level precision and move with sub-millimetre repeatability. Yet the moment they need to handle a raw egg, peel a label, or thread a cable — tasks any human child manages instinctively — they fail. The reason is tactile blindness.

Human fingers carry four distinct mechanoreceptor types (SA1, SA2, FA1, FA2), each tuned to different stimuli: sustained pressure, skin stretch, vibration, and fine texture. Together they generate a continuous, high-bandwidth stream of multidimensional data that the brain uses to modulate grip force in milliseconds. Current robotic grippers have nothing comparable.

Professor Tawfique Hasan, who led the Cambridge research team, puts the problem plainly: "Most existing tactile sensors are either too bulky, too fragile, too complex to manufacture, or unable to accurately distinguish between normal and tangential forces. This has been a major barrier to achieving truly dexterous robotic manipulation."

That limitation is visible in every humanoid robot demo today. Figure 02, Apptronik Apollo, and Tesla Optimus all impress in carefully staged manipulation tasks — but watch closely, and you see the same compensatory strategy: slow, overly cautious grasps, excessive squeeze force applied to avoid drops, and near-zero ability to respond to unexpected slip. The hands are capable. The skin is not.

How the Graphene Tactile Sensor Actually Works

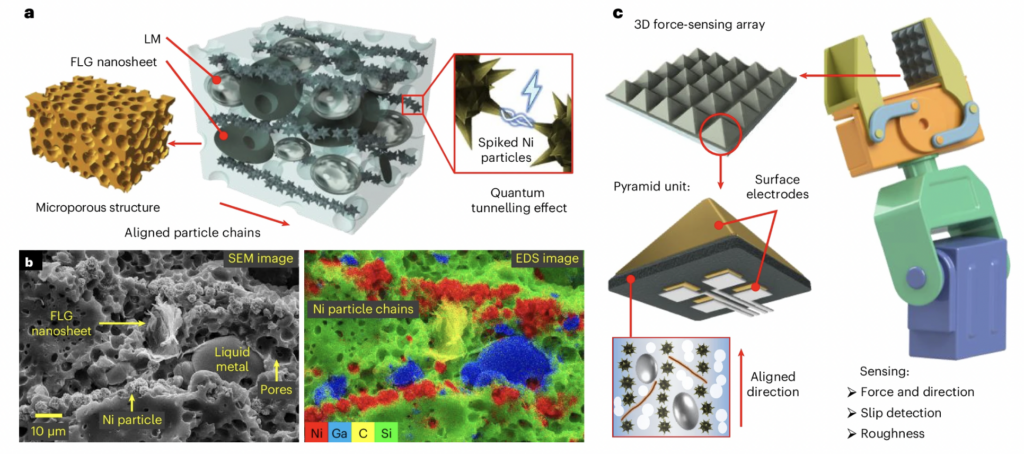

The Cambridge sensor solves this through a combination of materials science and bio-inspired geometry. The core material is a soft composite: graphene sheets, deformable liquid metal microdroplets, and nickel particles, all suspended in a silicone elastomer matrix. When the material deforms under contact, its electrical conductivity changes — and those changes encode force information.
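To make the idea of conductivity changes encoding force more concrete, here is a minimal Python sketch of a calibration step. It is not the Cambridge team's method: the linear response, the baseline resistance `r0`, the direction of the resistance change, and every number in the calibration lists are illustrative assumptions, and a real soft composite would probably need a nonlinear fit.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def relative_change(resistance, baseline):
    """Relative resistance change (R - R0) / R0, a unitless signal."""
    return (resistance - baseline) / baseline


# Hypothetical calibration data: NOT measurements from the paper.
# Assumes resistance falls as the composite is compressed.
r0 = 1000.0                                   # unloaded resistance, ohms
cal_resistance = [1000.0, 950.0, 900.0, 850.0]  # readings under known loads
cal_force = [0.0, 0.1, 0.2, 0.3]               # applied reference forces, N

signals = [relative_change(r, r0) for r in cal_resistance]
slope, intercept = fit_line(signals, cal_force)


def force_from_resistance(resistance):
    """Map a new resistance reading to an estimated force in newtons."""
    return slope * relative_change(resistance, r0) + intercept
```

With this made-up calibration, a reading halfway between two reference points maps to a force halfway between the corresponding loads, which is all a linear model can promise.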

The breakthrough is in the geometry. The composite is moulded into tiny pyramidal microstructures, some as small as 200 micrometres across (roughly twice the diameter of a human hair). This shape is deliberately borrowed from the microarchitecture of human skin, where ridged structures concentrate mechanical stress at localised points. The pyramid tips do the same thing artificially — they amplify stress concentration, making the sensor responsive to extremely low forces while retaining a wide measurement range.

Beneath each pyramid, four electrodes capture independent electrical signals. By comparing the relative magnitude of those four readings, the sensor mathematically reconstructs the full 3D force vector — distinguishing normal force (pressing straight down) from shear forces (lateral sliding) — in real time. This shear detection is what enables slip prediction: the sensor identifies the onset of object movement before the grip actually fails, allowing corrective force to be applied proactively.
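The four-electrode comparison described above can be sketched in a few lines of Python. This is a simplified stand-in, not the reconstruction used in the paper: it assumes the electrodes sit on the east, west, north, and south sides of the pyramid, that each signal responds linearly, and that the gains `k_shear` and `k_normal` have been obtained from a separate calibration. All of those names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ForceVector:
    fx: float  # shear component along x
    fy: float  # shear component along y
    fz: float  # normal component (pressing into the surface)


def reconstruct_force(east, west, north, south, k_shear=1.0, k_normal=1.0):
    """Estimate a 3D force from four electrode signal changes.

    Assumed model: a pure normal load raises all four signals equally,
    while a lateral load raises the signal on the side being pushed
    toward and lowers the opposite one.
    """
    fz = k_normal * (east + west + north + south) / 4.0  # common-mode part
    fx = k_shear * (east - west) / 2.0                   # east-west imbalance
    fy = k_shear * (north - south) / 2.0                 # north-south imbalance
    return ForceVector(fx, fy, fz)


# Equal signals: interpreted as a purely normal press.
pressed = reconstruct_force(1.0, 1.0, 1.0, 1.0)

# East signal higher than west: interpreted as normal load plus shear along +x.
sheared = reconstruct_force(1.5, 0.5, 1.0, 1.0)
```

The design choice here is the common-mode versus differential split: the average of the four readings stands in for normal force, and the imbalance between opposite electrodes stands in for shear. A real device would likely need cross-coupling terms and a nonlinear calibration.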

At smaller scales, arrays of these sensors can also extract surface texture information and identify object properties — mass, geometry, and material density — from force signal patterns alone, without requiring any prior knowledge of the object.

Benchmark Performance: How Does It Compare?

The Cambridge team's published data in Nature Materials positions the sensor as a significant step beyond the current state of the art. The key claim: the new device improves on existing flexible tactile sensors by roughly an order of magnitude on both minimum detectable force and sensor footprint.

| Metric | Typical Flexible Tactile Sensors | Cambridge Graphene Sensor |
|---|---|---|
| Minimum feature size | ~2,000–5,000 µm | ~200 µm |
| Force detection capability | Millinewton range | Detects a grain of sand |
| Force dimensionality | Normal force only (most) | Full 3D vector (normal + shear) |
| Slip detection | Post-slip (reactive) | Pre-slip (predictive) |
| Manufacturing complexity | High (optics or rigid structures) | Soft composite, no optics |
| Scalability target | Limited | Below 50 µm (future) |

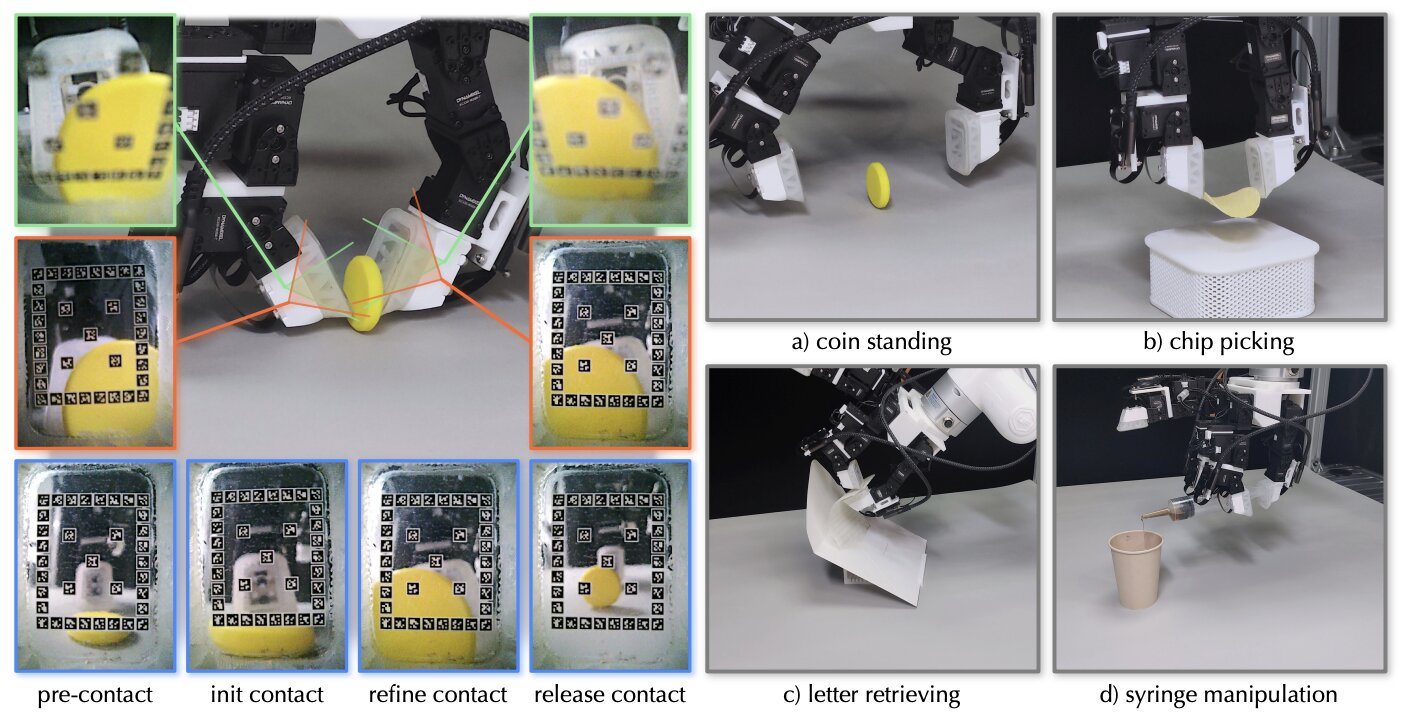

The sensor was validated in robotic gripper demonstrations, where it enabled robots to grasp thin paper tubes, objects that crush under any appreciable excess force, without damage. That kind of task requires sub-newton force control adjusted in real time. Conventional approaches, which rely on pre-programmed assumptions about object properties, cannot adapt in this way.

What This Means for Humanoid Robots and Cobots

This sensor doesn't solve humanoid dexterity on its own — but it addresses the most stubborn hardware bottleneck in the stack. Vision-based manipulation, the current fallback approach used by most humanoid platforms, has fundamental physical limits. Camera latency, occlusion during contact, and the inability to sense internal grip forces mean that even the best vision-language-action models are flying partially blind the moment fingertips touch an object.

A tactile skin with predictive slip detection and 3D force resolution changes the feedback loop entirely. Instead of reacting to a drop after it happens, a robot can sense the vector shift indicating imminent slip and apply corrective torque in the same control cycle. For tasks like folding laundry, handling glassware, assembling small components, or assisting patients in healthcare settings, that difference is the line between deployable and not.
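One common way to reason about imminent slip is the textbook Coulomb friction model: an object starts to slide when the shear force exceeds the friction coefficient times the normal force. The Python sketch below uses that model to raise grip force only when the remaining friction margin gets thin. It is an illustration under stated assumptions, not the controller used in the Cambridge demonstrations; the friction coefficient `mu`, the threshold `min_margin`, the cap `fz_max`, and both function names are hypothetical.

```python
import math


def slip_margin(fx, fy, fz, mu):
    """Fraction of the friction budget still unused.

    1.0 means no shear load at all; 0.0 means the contact is at
    (or past) the Coulomb slip limit |F_shear| = mu * F_normal.
    """
    if fz <= 0.0:
        return 0.0  # no normal force, so no friction budget
    shear = math.hypot(fx, fy)
    return max(0.0, 1.0 - shear / (mu * fz))


def adjust_grip(fz_cmd, fx, fy, fz, mu=0.5, min_margin=0.2, fz_max=2.0):
    """Return a new normal-force command in newtons.

    Leaves the command unchanged while the margin is healthy, and
    otherwise raises it just enough to restore `min_margin`, capped
    at `fz_max` to protect fragile objects.
    """
    if slip_margin(fx, fy, fz, mu) >= min_margin:
        return fz_cmd
    shear = math.hypot(fx, fy)
    needed = shear / (mu * (1.0 - min_margin))
    return min(fz_max, max(fz_cmd, needed))
```

The point of the margin-based rule is that grip force tracks the measured shear load instead of sitting at a fixed, overly cautious maximum, which is the behaviour the article attributes to robots without tactile feedback.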

The miniaturisation roadmap matters here too. The team reports a pathway to sensor features below 50 micrometres — approaching the mechanoreceptor density of actual human skin — with potential integration of temperature and humidity sensing in future iterations. That trajectory places this work on a credible path toward full artificial skin for humanoid hands, not just isolated fingertip sensors.

For collaborative robot (cobot) applications, the implications are similarly significant. Force-sensitive manipulation is already a selling point for platforms like the Universal Robots UR-series and FANUC CRX line, but current implementations detect aggregate wrist force, not localised tactile events at the contact surface. Sensors like this one could enable per-finger, per-contact-point data at the cobot gripper level. If you're evaluating used cobots for assembly or inspection tasks, this is the capability direction to watch.

What This Means for Robotics Buyers and Developers

For humanoid robot developers and buyers, this research signals that tactile sensing is moving from an academic curiosity to a near-term hardware component. A patent has been filed through Cambridge Enterprise, meaning commercialisation is an active objective, not a speculative outcome. No licensing or commercial timeline has been disclosed, but the involvement of ARIA (the UK's Advanced Research and Invention Agency) suggests production-scale development is in scope.

For cobot and industrial gripper integrators, the 3D force vector + slip detection combination is immediately relevant to any precision assembly, medical device handling, or food processing application where grasp control currently requires custom fixturing or slow, conservative motion profiles.

For prosthetics developers, the paper explicitly flags tactile feedback for advanced artificial limbs as a direct application pathway. The same miniaturised, skin-like sensing that benefits robot hands could restore meaningful tactile feedback to prosthetic hand users — a significant secondary market for this technology.

The research was supported by the Royal Society, the Henry Royce Institute, and ARIA. The paper, "Multiscale-structured miniaturized 3D force sensors", is published in Nature Materials (2026). For teams evaluating humanoid robots on Botmarket, tactile sensing capability is worth adding to any hardware evaluation rubric right now.

Frequently Asked Questions

What is the Cambridge graphene tactile sensor?

The sensor is a soft, flexible composite of graphene, liquid metal microdroplets, and nickel particles shaped into 200-micrometre pyramidal microstructures on a silicone substrate. It simultaneously detects normal and shear forces as a full 3D force vector, along with surface texture and object slip, capabilities that closely mirror the multidimensional sensing of human fingertips.

How does this sensor compare to existing robot tactile sensors?

According to the Nature Materials paper, the Cambridge sensor improves on current flexible tactile sensors by roughly an order of magnitude in both minimum detectable force and spatial resolution. It also adds predictive slip detection and 3D force vector reconstruction — capabilities that most commercial sensors either lack entirely or approximate poorly.

When will this graphene tactile sensor be available in commercial robots?

No commercial release date has been announced. A patent application has been filed through Cambridge Enterprise, the University of Cambridge's commercialisation arm, indicating active pursuit of licensing or spin-out. Supported by ARIA, the technology appears targeted at production-scale development, but typical timelines from academic patent filing to commercial deployment range from 3 to 7 years for sensor hardware.

Why does slip detection matter for humanoid robot dexterity?

Slip detection — specifically predictive slip detection, which identifies the onset of movement before a grip fails — allows a robot to apply corrective force in real time rather than reacting after an object has already dropped. Without it, robots must use excessive grip force as a safety buffer, which prevents handling fragile or deformable objects. This is a direct bottleneck for humanoid platforms attempting unstructured manipulation tasks.

Could this sensor be used in prosthetic hands?

Yes. The Cambridge researchers explicitly identify advanced prosthetics as an application pathway. The same miniaturised 3D force sensing that benefits robot grippers could restore tactile feedback to prosthetic limb users, improving grip control, safety awareness, and user confidence during object interaction.

What are the next development steps for this technology?

The team's stated roadmap includes miniaturising sensors below 50 micrometres — approaching the mechanoreceptor density of human skin — and integrating temperature and humidity sensing into future versions, moving toward a fully multimodal artificial skin rather than a force-only device.

The graphene tactile sensor from Cambridge represents the most technically credible step toward closing the tactile sensing gap in humanoid and collaborative robots published to date. It won't ship in the next generation of humanoid hands — but the trajectory from this paper to a production component is clearer than it's ever been.

If you're building or buying humanoid robots today, how much is tactile blindness actually costing your manipulation pipeline?

Join the discussion

Is tactile blindness the real ceiling on your robot's manipulation performance — or is it something else?