Coco Robotics has appointed a UCLA professor to lead a new physical AI research lab, using its accumulated real-world delivery robot data as the basis for training foundation models for autonomy. The company's fleet has logged millions of miles across urban environments, a scale of real-world data that most robotics startups can only approximate in simulation. This positions Coco not just as a delivery operator, but as a physical AI platform company.

- What Is Coco's Physical AI Lab and Who Is Leading It?

- Why Delivery Robot Fleets Are Ideal Training Grounds

- The Data Moat: Millions of Miles and What That Unlocks

- From Teleoperation to Autonomy: Coco's Strategic Shift

- What This Means for Robotics

- Frequently Asked Questions

What Is Coco's Physical AI Lab and Who Is Leading It?

Coco Robotics has established a dedicated physical AI research lab and recruited a UCLA professor to direct it. The lab's core mandate is to convert the company's accumulated fleet telemetry, sensor logs, and navigation data into foundation models capable of driving full robot autonomy — moving beyond the human-assisted teleoperation model Coco has relied on to date.

The appointment signals a deliberate shift in how Coco perceives itself. Founding a research lab with academic leadership is not a typical move for a last-mile delivery operator. It reflects an ambition to build proprietary AI systems that sit at the intersection of large-scale real-world data and learned robot behaviour — what the industry is increasingly calling physical AI (AI systems designed to understand and act within the physical world, not just process text or images).

According to TechCrunch, Coco is working toward automating its fleet using the millions of miles of operational data it has collected across real urban deployments.

Why Delivery Robot Fleets Are Ideal Training Grounds

Operational delivery fleets offer something that simulation and controlled lab environments cannot: genuine distributional diversity. Every sidewalk crack, unexpected pedestrian crossing, delivery bike cutting a corner, and rain-slicked ramp is a real training signal — not a procedurally generated approximation.

This is the core argument for why companies like Coco sit in a structurally advantaged position relative to pure-play AI labs trying to train robot foundation models. The challenge for most robotics AI researchers is the data gap: simulation is cheap but brittle when transferred to the real world (known as the sim-to-real gap, where policies trained in simulation often fail on physical hardware due to sensor noise, latency, and physical variation). Real-world data collection is expensive, slow, and operationally complex.

Coco has been collecting this data as a byproduct of running a commercial service. Every operational hour is simultaneously a revenue-generating delivery and a data-generation event. The business model funds the dataset — a structural advantage that is genuinely difficult to replicate from a standing start.

| Data Source | Scale | Diversity | Cost to Collect |
|---|---|---|---|
| Simulation (e.g. Isaac Sim) | Unlimited | Low (synthetic) | Low |
| Controlled lab collection | Limited | Low (curated) | High per hour |
| Academic robot datasets | Small (thousands of hours) | Medium | High |
| Operational fleet (Coco) | Millions of miles | High (real urban) | Near-zero marginal |

The Data Moat: Millions of Miles and What That Unlocks

Millions of miles of logged robot operation is a meaningful number. To contextualise it: a single robot covering a small urban delivery zone might accumulate 5-10 miles per operational day. Reaching millions of miles across a fleet implies years of multi-robot urban deployment, capturing an enormous variety of scenarios, seasonal conditions, and edge cases.
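As a rough back-of-the-envelope check on that claim, the sketch below converts a mileage target into calendar time. The daily mileage uses the 5-10 mile range quoted above; the fleet sizes and the function name `years_to_reach` are purely hypothetical, since Coco's actual fleet size is not given here, and the model assumes every robot runs every day.

```python
def years_to_reach(total_miles: float, miles_per_robot_day: float, fleet_size: int) -> float:
    """Calendar years for a fleet to log total_miles, assuming every robot runs every day."""
    robot_days = total_miles / miles_per_robot_day
    return robot_days / fleet_size / 365


# Hypothetical fleet sizes for illustration only; these are not Coco figures.
for fleet_size in (100, 500):
    slow = years_to_reach(1_000_000, 5, fleet_size)   # 5 miles per robot per day
    fast = years_to_reach(1_000_000, 10, fleet_size)  # 10 miles per robot per day
    print(f"{fleet_size} robots: {fast:.1f} to {slow:.1f} years per million miles")
```

Under these assumptions, a 100-robot fleet would need roughly 2.7 to 5.5 years for each million miles, which is consistent with the "years of multi-robot deployment" reading. A larger fleet shortens the timeline proportionally.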

This scale matters because foundation models for robotics (analogous to large language models, but trained on sensor data, actions, and physical outcomes rather than text) require vast, diverse datasets to generalise. The brittleness of current robot AI systems is largely a data problem. Models trained on narrow datasets tend to fail when they encounter novel situations; models trained on diverse, real-world data at scale are generally far more robust.

Coco's dataset likely includes:

- Visual and depth sensor streams from urban navigation across multiple cities and seasons

- Motor command and feedback logs capturing how the robot responded to thousands of distinct terrain and obstacle scenarios

- Human teleoperator interventions — critically, these label the exact moments where autonomous systems were insufficient, providing high-signal training targets for autonomy improvement

- Outcome data — successful deliveries, failed navigations, near-miss events — giving the model a reward signal grounded in operational reality

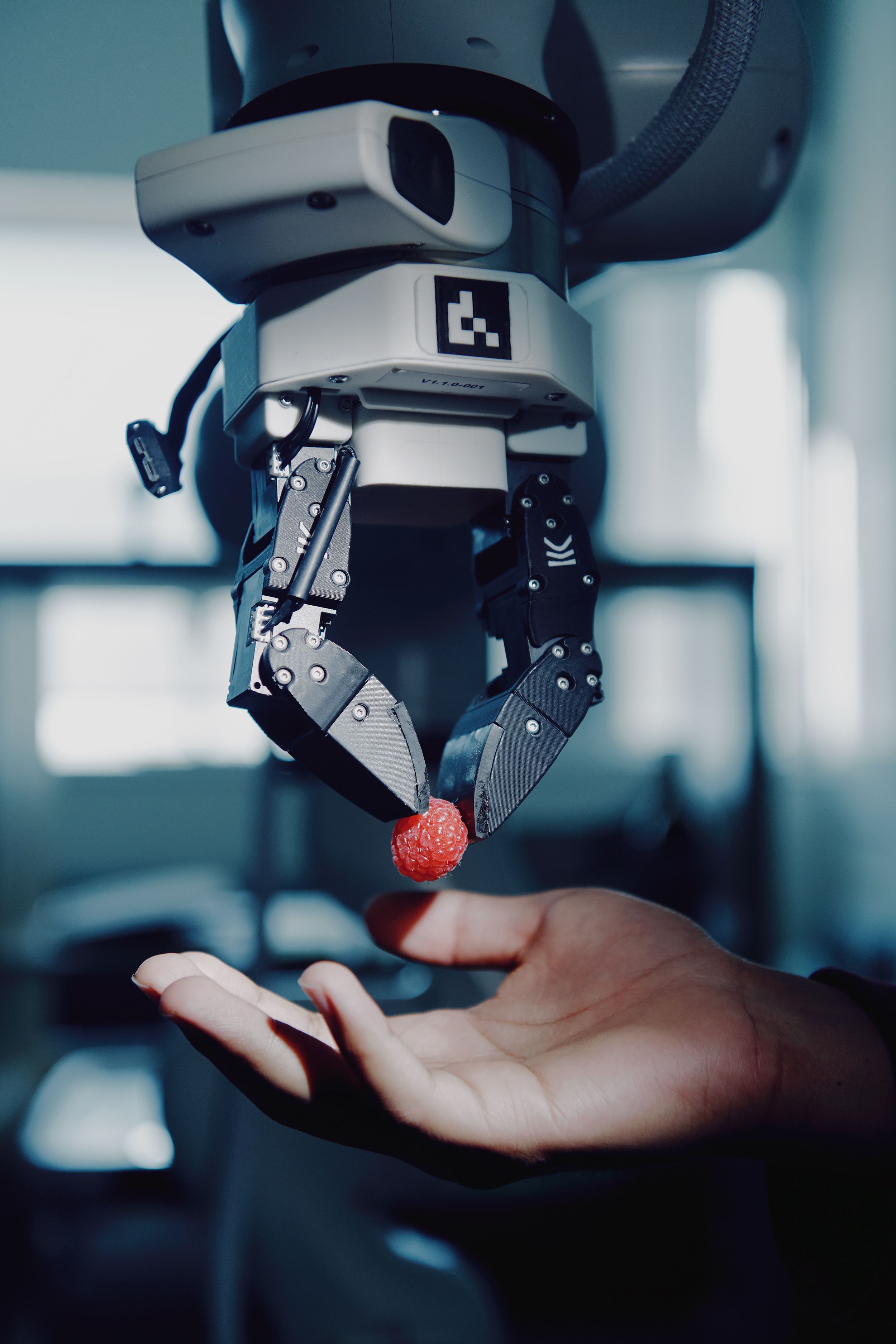

The teleoperator intervention logs deserve particular attention. Every time a remote human operator took control of a Coco robot, that event implicitly marked a hard problem: a situation the existing autonomy stack could not handle. This creates a naturally curated dataset of difficult cases, which are precisely the training examples that move a model from 90% autonomous to 99% autonomous. Those last nine percentage points are where the commercial value lives.
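To make the idea of interventions as implicit labels concrete, here is a minimal sketch of how hard-case windows might be pulled from a log. The `Frame` record, the `"auto"`/`"teleop"` mode labels, and the `lookback_s` parameter are all assumptions made for illustration; nothing here reflects Coco's actual log format or pipeline.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    t: float   # timestamp in seconds
    mode: str  # hypothetical labels: "auto" or "teleop"


def intervention_windows(frames: list[Frame], lookback_s: float = 5.0) -> list[list[Frame]]:
    """Collect the autonomous frames within lookback_s seconds before each auto -> teleop handover."""
    windows = []
    for i in range(1, len(frames)):
        if frames[i - 1].mode == "auto" and frames[i].mode == "teleop":
            start_t = frames[i].t - lookback_s
            window = []
            j = i - 1
            # Walk backwards while still in autonomous mode and inside the lookback window.
            while j >= 0 and frames[j].mode == "auto" and frames[j].t >= start_t:
                window.append(frames[j])
                j -= 1
            windows.append(window[::-1])  # restore chronological order
    return windows


log = [
    Frame(0.0, "auto"), Frame(1.0, "auto"), Frame(2.0, "auto"),
    Frame(3.0, "teleop"), Frame(4.0, "teleop"),
    Frame(5.0, "auto"), Frame(6.0, "teleop"),
]
hard_cases = intervention_windows(log, lookback_s=2.0)
print([[f.t for f in w] for w in hard_cases])  # [[1.0, 2.0], [5.0]]
```

Each returned window holds the moments leading up to a handover, which is where the autonomy stack was presumably struggling. In a real system the frames would carry sensor data and commands, and the operator's subsequent actions could serve as the demonstration to imitate.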

From Teleoperation to Autonomy: Coco's Strategic Shift

Coco's current operating model uses remote human teleoperators to assist robots when autonomous navigation fails — a common hybrid approach in last-mile delivery robotics. It reduces the cost of autonomy errors while still deploying physical robots at commercial scale. Companies including Starship Technologies and Kiwibot have used variations of this model.

The strategic tension is clear: teleoperation is a bridging mechanism, not a destination. Human operator costs create a ceiling on unit economics. Full or near-full autonomy is required for the business model to scale efficiently, which is exactly why building the physical AI lab now makes sense. Coco has the operational infrastructure, the deployment footprint, and now — with the UCLA lab appointment — the research capability to attempt the transition systematically.
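The cost ceiling can be illustrated with a simple model in which operator labour scales with the share of each trip that needs human attention. This is a sketch under stated assumptions: the wage, trip duration, and intervention fractions below are invented for illustration and are not Coco figures, and the function name `operator_cost_per_delivery` is hypothetical.

```python
def operator_cost_per_delivery(
    wage_per_hour: float,
    delivery_minutes: float,
    intervention_fraction: float,
) -> float:
    """Human labour cost per delivery when an operator is needed for a fraction of the trip."""
    operator_hours = (delivery_minutes / 60) * intervention_fraction
    return wage_per_hour * operator_hours


# All inputs are illustrative assumptions, not reported numbers.
for fraction in (1.0, 0.10, 0.01):
    cost = operator_cost_per_delivery(
        wage_per_hour=20.0,
        delivery_minutes=30.0,
        intervention_fraction=fraction,
    )
    print(f"operator attention {fraction:.0%}: ${cost:.2f} per delivery")
```

In this model the labour cost falls linearly with the intervention fraction, so each improvement in autonomy translates directly into lower cost per delivery. Real operations would add fixed costs and operator idle time that this sketch ignores.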

This trajectory mirrors what happened in autonomous vehicles: years of supervised fleet operation generated the data that eventually trained systems capable of running without a safety driver. The transition in sidewalk robotics may be faster, given the lower speeds and more constrained operational domain compared to highway driving.

What This Means for Robotics

For robotics developers and researchers, Coco's lab represents a case study in the data flywheel strategy: deploy robots commercially, collect real-world data at scale, use that data to improve autonomy, which enables wider deployment, which generates more data. This loop is becoming the dominant competitive dynamic in physical AI — and it heavily favours companies that achieved early operational scale.

For the broader delivery robotics sector, this move raises the bar for what constitutes a credible autonomy roadmap. Investors and enterprise customers will increasingly ask: where is your training data coming from, and at what scale?

For buyers considering delivery robots, the autonomy gap between platforms is real and widening. Robots backed by large real-world datasets will close the teleoperation dependency faster than those relying primarily on simulation. When evaluating platforms, ask vendors directly: what is your real-world operational mileage, and how does your data pipeline feed back into your autonomy stack?

If you're exploring autonomous mobile robots for logistics or last-mile applications, browse industrial and mobile robots on Botmarket to compare current-generation platforms across autonomy capabilities and price points.

Frequently Asked Questions

What is physical AI? Physical AI refers to AI systems designed to perceive, reason about, and act within the physical world, in contrast to language or vision models that process digital inputs. In robotics, physical AI encompasses navigation, manipulation, and embodied decision-making trained on sensor data from real-world robot deployments rather than text or image datasets.

How many miles of data has Coco Robotics collected? Coco Robotics has publicly referenced millions of miles of real-world delivery robot operation across its fleet. The exact figure has not been disclosed, but this scale represents years of multi-robot urban deployment across sidewalk environments in multiple cities — substantially larger than most academic robotics datasets.

What does a UCLA professor bring to a robotics company's AI lab? Academic lab directors typically contribute expertise in machine learning architectures, access to graduate researcher pipelines, and credibility in the research community that aids in talent recruitment. For a physical AI lab specifically, robotics faculty research often covers areas like imitation learning, reinforcement learning, and robot foundation models that are directly applicable to fleet autonomy.

Why do teleoperator intervention logs matter for training autonomous robots? Each teleoperator intervention marks a moment where the robot's autonomous system failed or was insufficient. These events effectively label the hardest navigation and decision problems in the dataset — exactly the edge cases that must be solved to achieve high-reliability autonomy. High-signal difficult-case data is disproportionately valuable for improving model robustness compared to routine successful navigations.

How does Coco's approach compare to simulation-based robot training? Simulation allows unlimited data generation at low cost but suffers from the sim-to-real gap — policies that work in simulation often degrade on physical hardware due to sensor noise, latency, and physical variation. Coco's real-world fleet data bypasses this gap entirely, though it is more limited in the types of scenarios that can be captured compared to a fully controllable simulation environment.

Coco Robotics is executing a textbook physical AI pivot: convert operational scale into a data asset, build the research infrastructure to exploit it, and use the resulting autonomy improvements to reshape unit economics. The delivery robot fleet was always the means; the foundation model may prove to be the end.

Does your robotics autonomy strategy depend on real-world data collection, or are you betting on simulation — and why?
